1. About This Guide

Welcome to the OpenNMS Horizon Administrators Guide. This documentation provides information and procedures on setup, configuration, and use of the OpenNMS Horizon platform. Using a task-based approach, chapters appear in a recommended order for working with OpenNMS Horizon:

- Opt in or out of usage statistics collection (required during first login).
- Create users and security roles.
- Provision your system.

1.1. Audience

This guide is suitable for administrative users and those who will use OpenNMS Horizon to monitor their network.

1.2. Related Documentation

Installation Guide: how to install OpenNMS Horizon

Developers Guide: information and procedures on developing for the OpenNMS Horizon project

OpenNMS 101: a series of video training tutorials that build on each other to get you up and running with OpenNMS Horizon

OpenNMS 102: a series of stand-alone video tutorials on OpenNMS features

OpenNMS Helm: a guide to OpenNMS Helm, an application for creating flexible dashboards to interact with data stored by OpenNMS

Architecture for Learning Enabled Correlation (ALEC): guide to this framework for logically grouping related faults (alarms) into higher level objects (situations) with OpenNMS.

1.3. Typographical Conventions

This guide uses the following typographical conventions:

| Convention | Meaning |
|---|---|
| bold | Indicates UI elements to click or select in a procedure, and the names of UI elements like dialogs or icons. |
| italics | Introduces a defined or special word. Also used for the titles of publications. |
| monospace | Anything you must type or enter, and the names for code-related elements (classes, methods, commands). |

1.4. Need Help?

- Join the OpenNMS Discussion chat.
- Join our community on Discourse.
- Contact sales@opennms.com to purchase customer support.

2. Data Choices

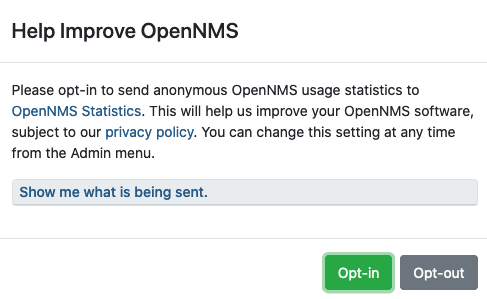

The first time a user with the Admin role logs into the system, a prompt appears for permission to allow the Data Choices module to collect and publish anonymous usage statistics to https://stats.opennms.org.

The OpenNMS Group uses this information to help determine product usage, and improve the OpenNMS Horizon software.

Click Show me what is being sent to see what information is being collected. Statistics collection and publication happen only once an admin user opts in.

When enabled, the following anonymous statistics are collected and published on system startup and every 24 hours after:

- System ID (a randomly generated UUID)
- OpenNMS Horizon Release
- OpenNMS Horizon Version
- OS Architecture
- OS Name
- OS Version
- Number of alarms in the alarms table
- Number of events in the events table
- Number of IP interfaces in the ipinterface table
- Number of nodes in the node table
- Number of nodes, grouped by System OID

| You can enable or disable usage statistics collection at any time by choosing admin>Configure OpenNMS>Additional Tools>Data Choices and choosing Opt-in or Opt-out in the UI. |

3. User Management

Managing users involves the following tasks:

3.1. First-Time Login and Data Choices

Access the OpenNMS Horizon web application at http://<ip-or-fqdn-of-your-server>:8980/opennms.

The default user login is admin with the password admin.

The first time you log in we prompt for permission to allow the Data Choices module to collect and publish anonymous usage statistics to https://stats.opennms.org.

The OpenNMS Group uses this information to help determine product usage and to improve the OpenNMS Horizon software.

Click Show me what is being sent to see what information we collect. Statistics collection and publication happen only if an admin user opts in.

| Admin users can enable or disable usage statistics collection at any time by logging into the UI, clicking the gear icon, selecting Data Choices in the Additional Tools area, and clicking Opt-in or Opt-out. |

Data Collection

When enabled, the Data Choices module collects the following anonymous statistics and publishes them on system startup and every 24 hours after:

- System ID (a randomly generated universally unique identifier (UUID))
- OpenNMS Horizon Release
- OpenNMS Horizon Version
- OS Architecture
- OS Name
- OS Version
- Number of alarms in the alarms table
- Number of events in the events table
- Number of IP interfaces in the ipinterface table
- Number of nodes in the node table
- Number of nodes, grouped by System OID

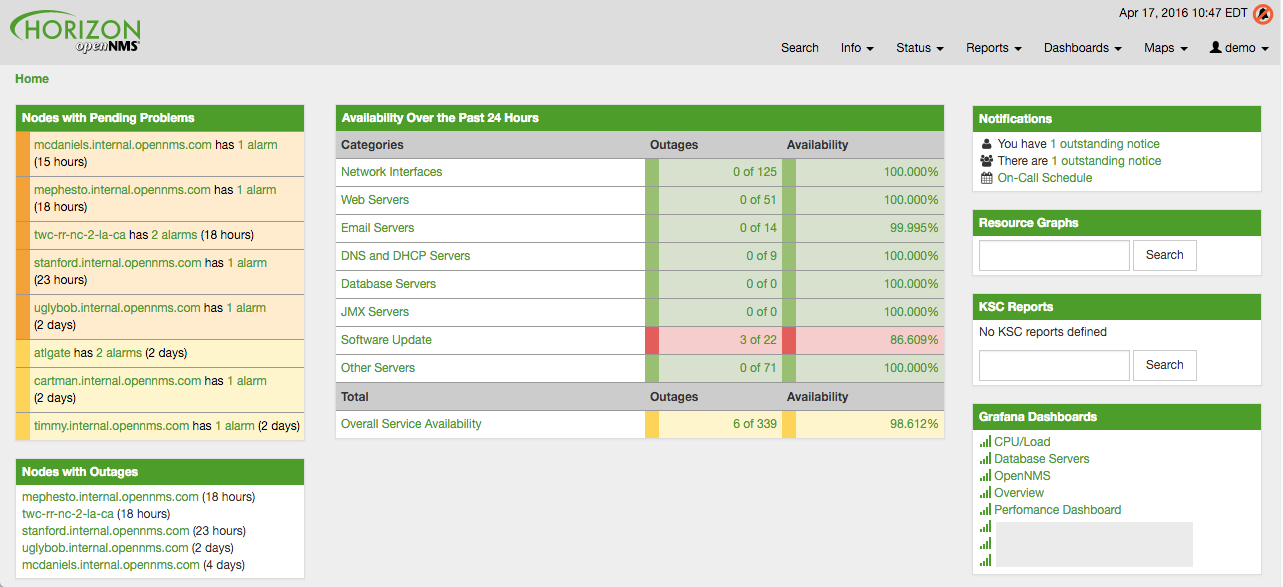

3.1.1. Admin User Setup

After logging in for the first time, make sure to change the default admin user password to a secure one:

-

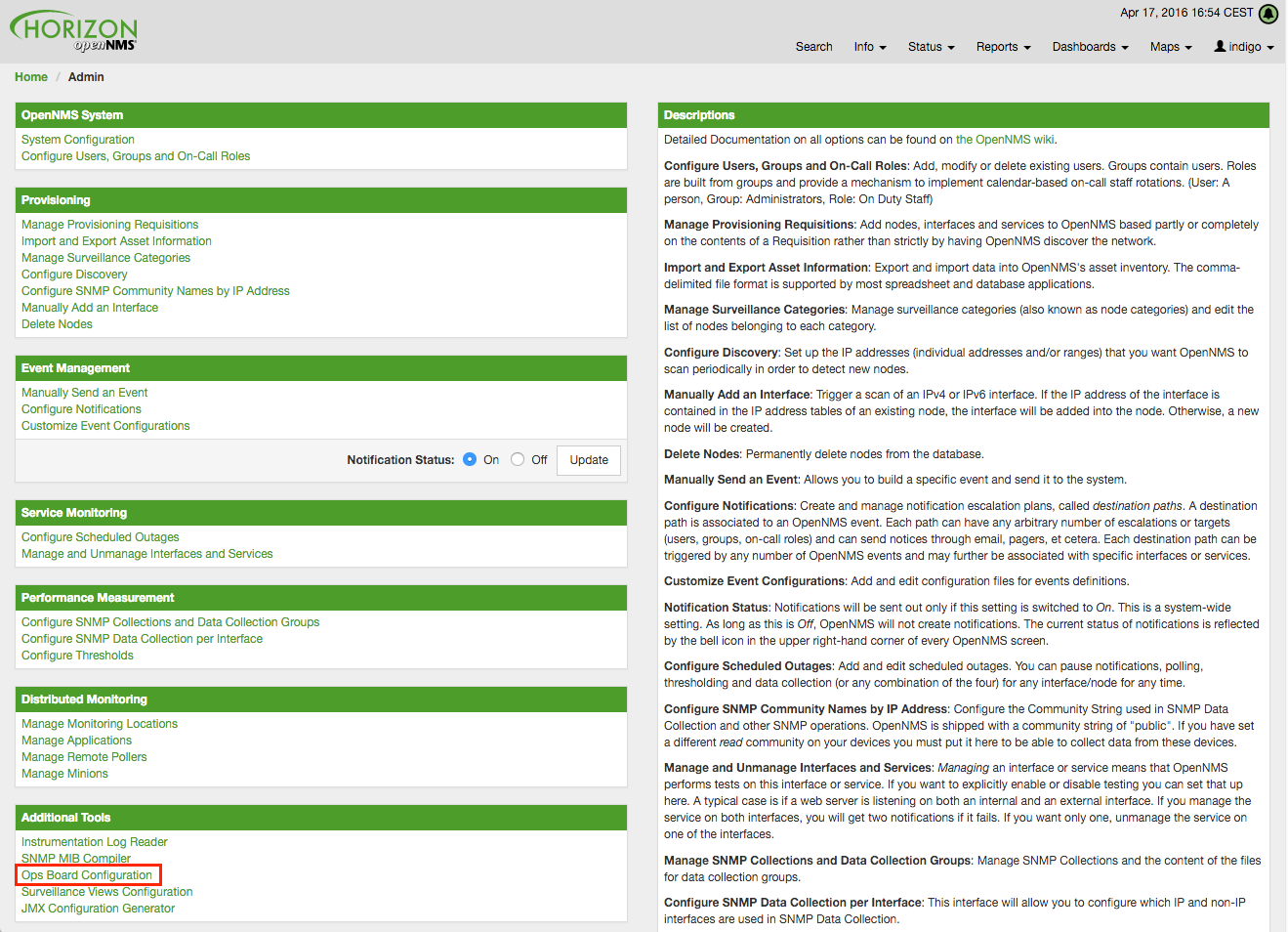

Click the gear icon in the top right.

-

Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Users.

-

Click Modify beside the admin user.

-

In the User Password area, click Reset Password, update the password and click OK.

-

Click Finish at the bottom of the Modify User screen to save changes.

| Please note that angle brackets (<>), single (') and double quotation marks ("), and the ampersand symbol (&) are not allowed to be used in the user ID. |
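The character restriction above can be checked before submitting a user ID or group name. The following is a minimal sketch, not the validation OpenNMS Horizon itself performs; the function names are hypothetical, and rejecting an empty identifier is an added assumption.

```python
# Characters the guide lists as not allowed in a user ID or group name.
FORBIDDEN_CHARS = set("<>'\"&")


def invalid_chars(identifier):
    """Return the forbidden characters found, in order of first appearance."""
    found = []
    for ch in identifier:
        if ch in FORBIDDEN_CHARS and ch not in found:
            found.append(ch)
    return found


def is_valid_identifier(identifier):
    """True if the identifier is non-empty and has no forbidden characters.

    Rejecting the empty string is an assumption made for this sketch.
    """
    return bool(identifier) and not invalid_chars(identifier)
```

For example, `is_valid_identifier("o'brien")` returns `False` because of the single quotation mark.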

We recommend not using the default admin user, but instead creating specific users with the admin role and/or other permissions.

This helps to keep track of who has performed tasks such as clearing alarms or creating notifications.

| Do not delete the default admin and rtc users. The rtc user is used for the communication of the Real-Time Console on the start page to calculate the node and service availability. |

3.2. User Creation and Configuration

Only a user with admin privileges can create users and assign security roles to them.

We recommend creating a new user with admin privileges instead of using the default admin (see Admin User Setup).

Ideally, each user account corresponds to a person, to help track who performs tasks in your OpenNMS Horizon system. Assigning different security roles to each user helps restrict what tasks the user can perform.

In addition to local users, you can configure external authentication services including LDAP / LDAPS, RADIUS, and SSO. Configuration specifics for these services are outside the scope of this documentation.

| Do not delete the default admin and rtc users. The rtc user is used for the communication of the Real-Time Console on the start page to calculate the node and service availability. |

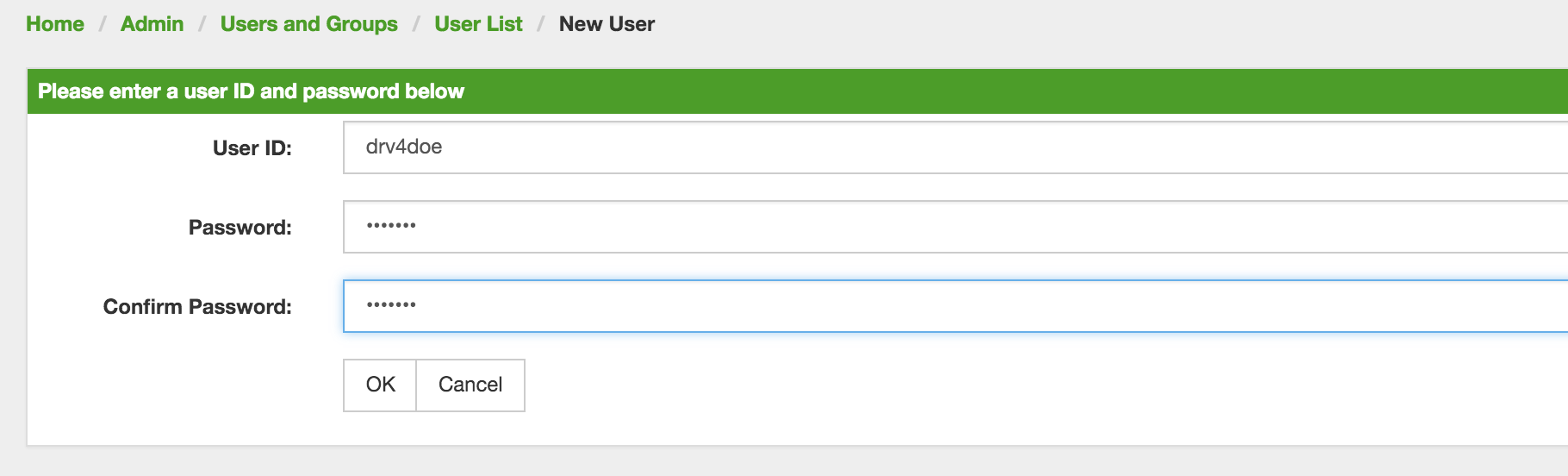

3.2.1. Creating a User

- Log in as a user with administrative permissions.
- Click the gear icon in the top right.
- Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Users.
- Click Add new user, specify a user ID, password, and password confirmation, and click OK.

  | Please note that angle brackets (<>), single (') and double quotation marks ("), and the ampersand symbol (&) are not allowed to be used in the user ID. |
- Optional: add user information in the appropriate fields.
- Optional: assign user permissions.

  By default, a new user can acknowledge and work with alarms and notifications, but cannot access the Configure OpenNMS administration menu. Add the ROLE_ADMIN role to create a new admin.
- Optional: specify where to send messages to the user in the notification information area.
- Optional: set a schedule for when a user should receive notifications.
- Click Finish to save changes.

3.2.2. Create User Duty Schedule

A duty schedule specifies the days and times a user (or group) receives notifications, on a per-week basis. This feature allows you to customize a schedule based on your team’s hours of operation. Schedules are additive: a user could have a regular work schedule, and a second schedule for days or weeks when they are on call.

If OpenNMS Horizon needs to notify an individual user, but that user is not on duty at the time, it will never send the notification to that user.

Notifications sent to users in groups are different:

- Group on duty at time of notification: all users also on duty receive the notification.
- Group on duty, no member users on duty: the notification is queued and sent to the next user who comes on duty.
- Off-duty group: the notification is never sent.
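The delivery rules above can be summarized as a small decision function. This is an illustrative sketch of the rules as described in this section, not the notification daemon's code; the `Outcome` names and function signatures are hypothetical.

```python
from enum import Enum


class Outcome(Enum):
    SEND_NOW = "send now"
    QUEUE = "queue until a member comes on duty"
    NEVER = "never sent"


def user_outcome(user_on_duty):
    """An individual user is notified only while on duty."""
    return Outcome.SEND_NOW if user_on_duty else Outcome.NEVER


def group_outcome(group_on_duty, members_on_duty):
    """Return (outcome, recipients) for a notification sent to a group.

    members_on_duty lists the group members who are on duty right now.
    """
    if not group_on_duty:
        return Outcome.NEVER, []
    if members_on_duty:
        return Outcome.SEND_NOW, list(members_on_duty)
    # Group is on duty but nobody in it is: hold for the next user on duty.
    return Outcome.QUEUE, []
```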

To add a duty schedule for a user (or group), follow these steps:

- Log in as a user with administrative permissions.
- Click the gear icon in the top right.
- Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Users (Configure Groups).
- Choose the user (or group) you want to modify.
- In the Duty Schedule area, select the number of schedules you want to add from the drop-down and click Add Schedule.
- Specify the days and times during which you want the user (or group) to receive notifications.
- Click Finish.

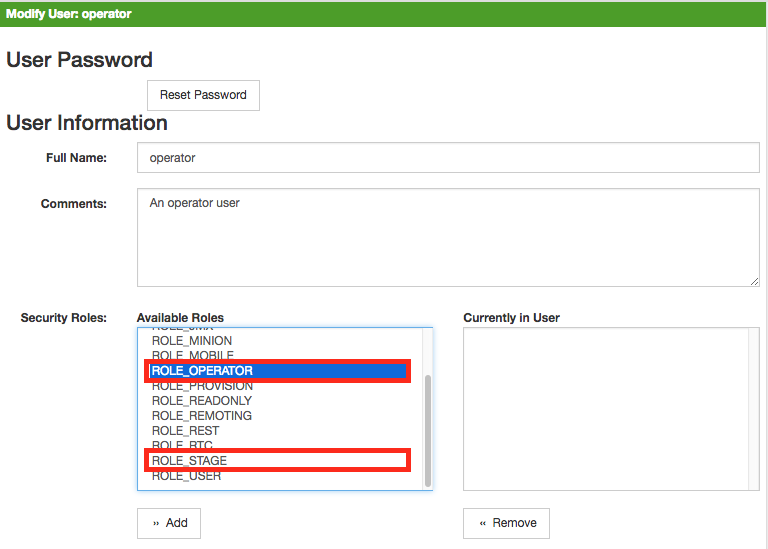

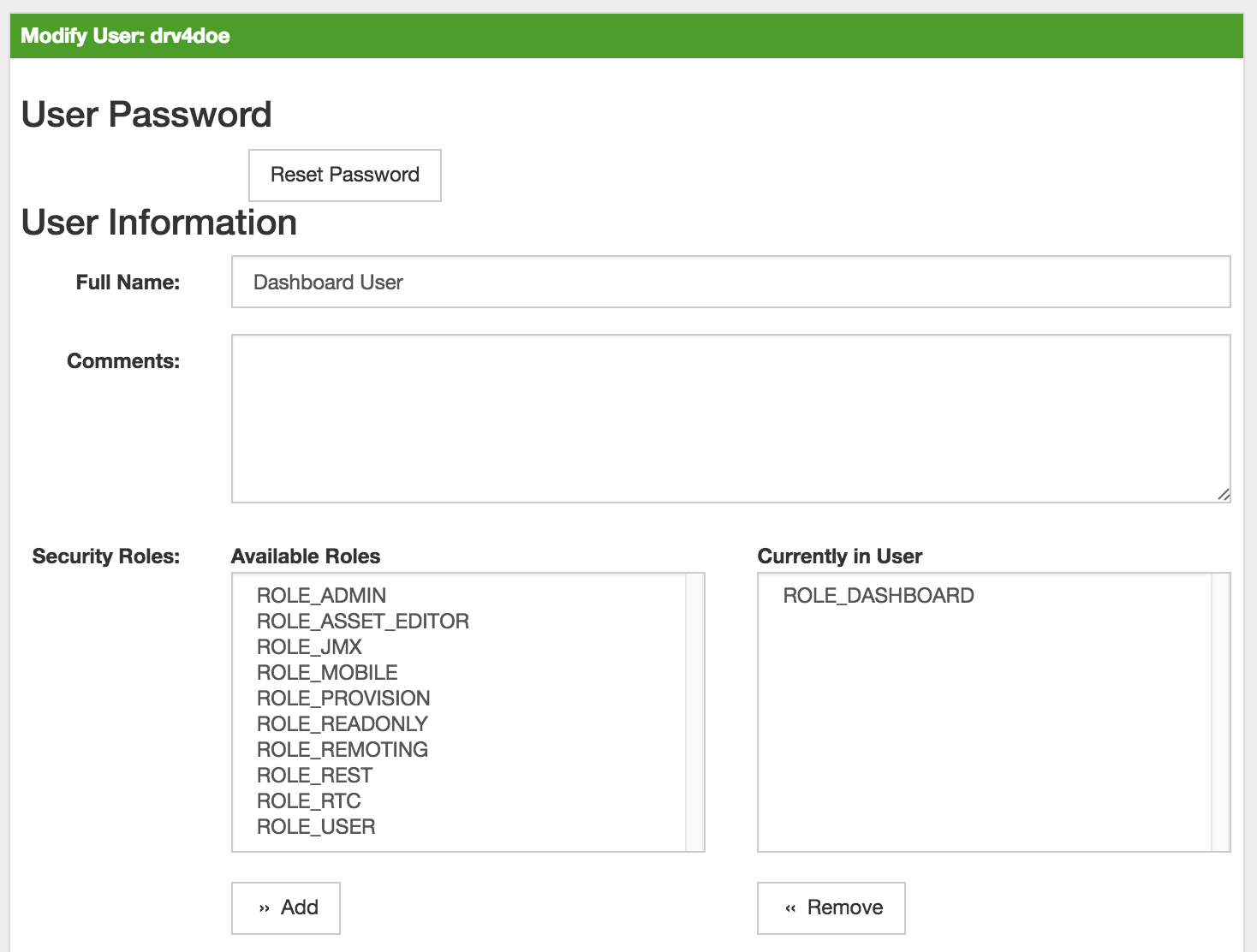

3.2.3. Assigning User Permissions

Create user permissions by assigning security roles. These roles regulate access to the web UI and the REST API to exchange monitoring and inventory information. In a distributed installation the Minion instance requires the ROLE_MINION permission to interact with OpenNMS Horizon.

Available security roles (those with an asterisk are the most commonly used):

| Security Role Name | Description |
|---|---|
| ROLE_ADMIN* | Permissions to create, read, update, and delete in the web UI and the REST API. |
| ROLE_ASSET_EDITOR | Permissions only to update the asset records from nodes. |
| ROLE_DASHBOARD | Allows user access only to the dashboard. |
| ROLE_DELEGATE | Allows actions (such as acknowledging an alarm) to be performed on behalf of another user. |
| ROLE_FLOW_MANAGER | Allows the user to edit flow classifications. |
| ROLE_JMX | Allows retrieving JMX metrics but not executing MBeans of the OpenNMS Horizon JVM, even if they just return simple values. |
| ROLE_MINION | Minimum required permissions for a Minion to operate. |
| ROLE_MOBILE | Allows the user to use the OpenNMS COMPASS mobile application to acknowledge alarms and notifications via the REST API. |
| ROLE_PROVISION | Allows the user to use the provisioning system and configure SNMP in OpenNMS Horizon to access management information from devices. |
| ROLE_READONLY* | User limited to reading information in the web UI; unable to change alarm states or notifications. |
| ROLE_REPORT_DESIGNER | Permissions to manage reports in the web UI and REST API. |
| ROLE_REST | Allows users to interact with the entire OpenNMS Horizon REST API. |
| ROLE_RTC* | Exchanges information with the OpenNMS Horizon Real-Time Console for availability calculations. |
| ROLE_USER* | Default permissions for a new user to interact with the web UI: can escalate and acknowledge alarms and notifications. |

- Log in as a user with administrative permissions.
- Click the gear icon in the top right.
- Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Users.
- Click the modify icon next to the user you want to update.
- Select the role from Available Roles in the Security Roles section.
- Click Add to assign the security role to the user.
- Click Finish to apply the changes.
- Log out and log in to apply the new security role settings.

3.2.4. Creating Custom Security Roles

To create a custom security role you need to define the name and specify the security permissions.

-

Create a file called

$OPENNMS_HOME/etc/security-roles.properties. -

Add a property called

roles, and for its value, a comma-separated list of the custom security roles, for example:

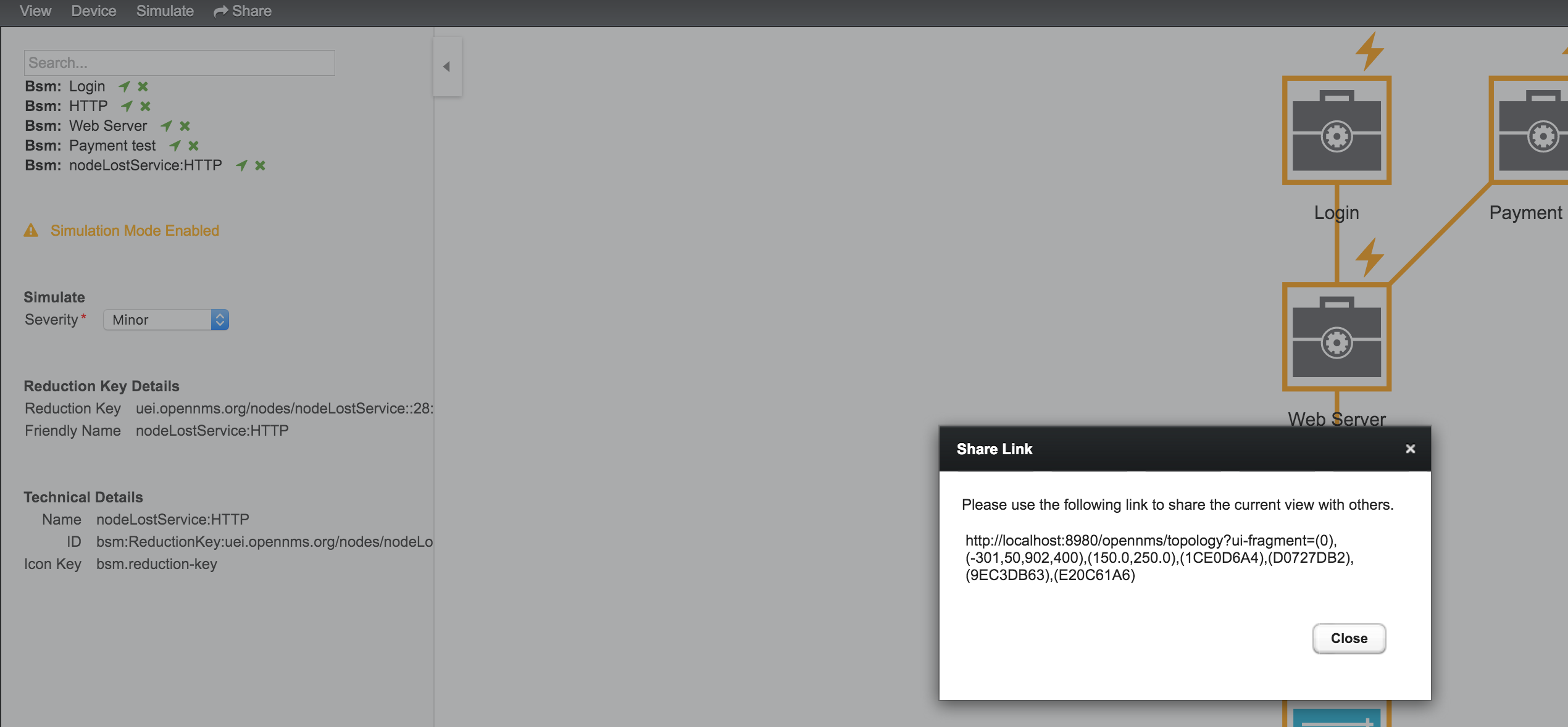

roles=operator,stageThe new custom security roles will appear in the web UI:

To define permissions associated with the custom security role, manually update the application context of the Spring Security here:

/opt/opennms/jetty-webapps/opennms/WEB-INF/applicationContext-spring-security.xml3.3. Groups

A group is a collection of users. Organizing users into groups helps with notifications and allows you to assign a set of users to on-call roles to build more complex notification workflows.

3.3.1. Creating a User Group

- Log in as a user with administrative permissions.
- Click the gear icon in the top right.
- Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Groups.
- Specify a group name and description and click OK.

  | Please note that angle brackets (<>), single (') and double quotation marks ("), and the ampersand symbol (&) are not allowed to be used in the group name. |
- Add users to the group by selecting them from the Available Users column and using the arrows to move them to the Currently in Group column.
- (Optional) Assign categories of responsibility to the group, such as Routers, Switches, Servers, etc.
- (Optional) Create a duty schedule.
- Click Finish.

| Users will receive notifications in the order in which the user appears in the group. |

| If you delete a user group, no one receives notification that the group has been deleted. If the group is associated with a schedule, that schedule will no longer exist, and users associated with that group will no longer receive notifications previously specified in the schedule. |

The on-call roles feature allows you to assign a predefined duty schedule to an existing group of users. A common use case is to have system engineers in on-call rotations with a given schedule.

Each on-call role includes a user designated as a supervisor, who receives notifications when no one is on duty to receive OpenNMS Horizon notifications.

The supervisor must have admin privileges.

3.4. Assigning a Group to an On-Call Role

Before assigning a group to an on-call role, you must create a group.

-

Log in as a user with administrative permissions.

-

Click the gear icon in the top right.

-

Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure On-Call Roles.

-

Click Add New On-Call Role and specify a name, supervisor, group and description.

-

Click Save.

-

In the calendar, click the plus (+) icon on the day for which you want to create a schedule.

-

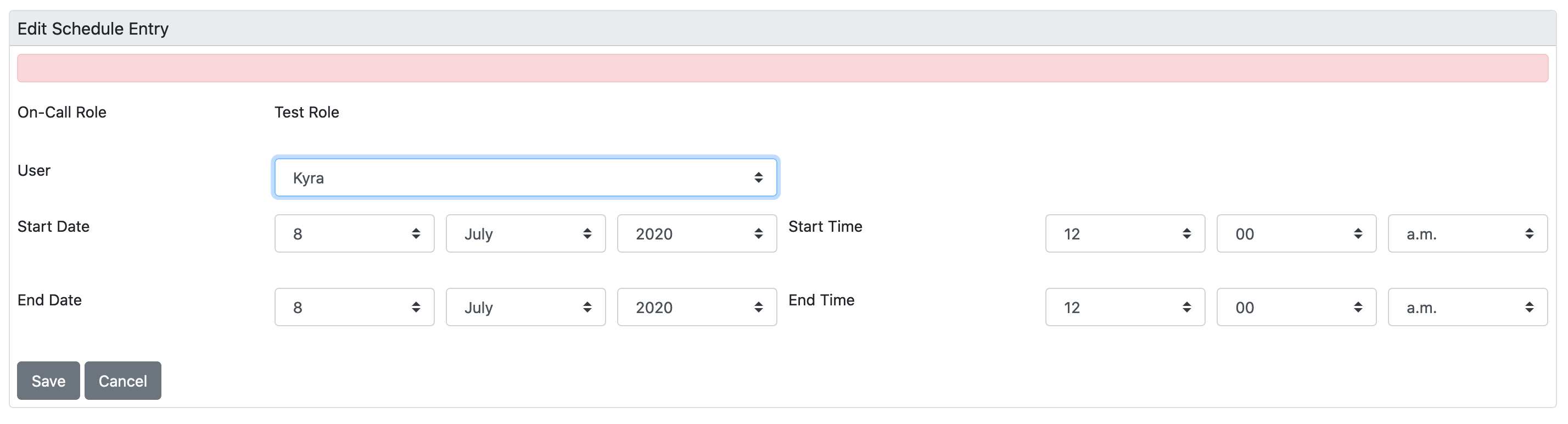

Specify the user, date, and time the user should be on call and click Save:

-

Repeat for other days and users.

-

Click Done to apply the changes.

3.5. User Maintenance

User maintenance describes additional tasks and information related to users.

3.5.1. Passwords

- Log in as a user with administrative permissions.
- Click the gear icon in the top right.
- Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Users.
- Click the Modify icon next to an existing user and select Reset Password.
- Type a new Password, Confirm Password, and click OK.
- Click Finish.

- Log in with your user name and old password.
- Choose Change Password from the drop-down below your login name.
- Specify your current password, then set the new password and confirm it.
- Click Submit.
- Log out and log in with your new password.

3.5.2. Deleting users and groups

- Log in as a user with administrative permissions.
- Click the gear icon in the top right.
- Choose Configure OpenNMS → Configure Users, Groups and On-Call roles and select Configure Users (Configure Groups).
- Click the trash bin icon beside the user (or group) you want to delete.
- Confirm the delete request with OK.

| When you delete a group, no one receives notification that the group has been deleted. Be aware that deleting a group or user also removes any schedules associated with that group or user, meaning they will not receive notifications specified as part of a schedule. |

3.5.3. Advanced Configuration

OpenNMS Horizon persists the user, password, and other detail descriptions in the users.xml file.

3.6. Web UI Pre-Authentication

It is possible to configure OpenNMS Horizon to run behind a proxy that provides authentication, and then pass the pre-authenticated user to the OpenNMS Horizon webapp using a header.

Define the pre-authentication configuration in $OPENNMS_HOME/jetty-webapps/opennms/WEB-INF/spring-security.d/header-preauth.xml. This file is automatically included in the Spring Security context, but is not enabled by default.

| DO NOT configure OpenNMS Horizon this way unless you are certain the web UI is accessible only to the proxy and not to end users. Otherwise, malicious attackers can craft queries that include the pre-authentication header and get full control of the web UI and REST APIs. |

3.6.1. Enabling Pre-Authentication

Edit the header-preauth.xml file, and set the enabled property:

<beans:property name="enabled" value="true" />

3.6.2. Configuring Pre-Authentication

You can also set the following properties to change the behavior of the pre-authentication plugin:

| Property | Description | Default |
|---|---|---|
| enabled | Whether the pre-authentication plugin is active. | false |
| | If true, disallow login if the header is not set or the user does not exist. If false, fall through to other mechanisms (basic auth, form login, etc.) | |
| | The HTTP header that will specify the user to authenticate as. | |
| | A comma-separated list of additional credentials (roles) the user should have. | |

4. Provisioning

4.1. Introduction

Provisioning is a mechanism to import node and service definitions either from an external source such as DNS or HTTP or via the OpenNMS Horizon web UI. The Provisiond daemon maintains your managed entity inventory through policy-based provisioning.

Provisiond comes with a RESTful Web Service API for easy integration with external systems such as a configuration management database (CMDB) or external inventory systems. It also includes an adapter API for interfacing with other management systems such as configuration management.

4.1.1. How It Works

Provisiond receives requests to add managed entities (nodes, IP interfaces, SNMP interfaces, services) via three basic mechanisms:

- automatic discovery (typically via the Discovery daemon)
- directed discovery using an import requisition (typically via the Provisioning UI)
- asset import through the ReST API or the provisioning integration server (PRIS)

OpenNMS Horizon enables you to control Provisiond behavior by creating provisioning policies that include scanning frequency, IP ranges, and which services to detect.

Regardless of the method, provisioning is an iterative process: you will need to fine-tune your results to exclude or add things to what you monitor.

4.1.2. Automatic Discovery

OpenNMS Horizon uses an ICMP ping sweep to find IP addresses on the network and provision node entities. Using auto discovery with detectors allows you to specify services that you need to detect in addition to the ICMP IP address ping. Import handlers allow you to further control provisioning.

Automatically discovered entities are analyzed, persisted to the relational data store, and managed based on the policies defined in the default foreign source definition:

- scanned to discover node entity’s interfaces (SNMP and IP)
- interfaces are persisted
- service detection of each IP interface
- node merging

| Merging occurs only when two automatically discovered nodes appear to be the same node. Nodes discovered directly are not included in the node merging process. |

4.1.3. Directed Discovery

Directed discovery allows you to specify what you want to provision based on an existing data source such as an in-house inventory, stand-alone provisioning system, or set of element management systems. Using an import requisition, this mechanism directs OpenNMS Horizon to add, update, or delete a node entity exactly as defined by the external source. No discovery process is used for finding more interfaces or services.

| An import requisition is an XML definition of node entities to be provisioned from an external source into OpenNMS Horizon. See the requisition schema (XSD) for more information. |

Understanding the Process

Directed discovery involves three phases:

- import (with three sub-phases)
  - marshal
  - audit
  - limited SNMP scan
- node scan
- service scan

The import phase begins when Provisiond receives a request to import a requisition from a URL. The requisition is marshalled into Java objects for processing. An audit, based on the unique foreign ID of the foreign source, determines whether the node already exists; the imported object is then added, updated, or deleted from the inventory.

| If any syntactical or XML structural problems occur in the requisition, the entire import is abandoned and no import operations are completed. |

If a requisition node has an interface defined as the primary SNMP interface, then during the update and add operations the node is scanned for minimal SNMP attribute information.

The node scan phase discovers details about the node and interfaces that were not directly provisioned. All physical (SNMP) and logical (IP) interfaces are discovered and persisted based on any provisioning policies that may have been defined for the foreign source associated with the import requisition.

After interface discovery, Provisiond moves to service detection on each IP interface entity.
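The audit step of the import phase can be pictured as a comparison of foreign IDs. The sketch below is a simplified model, not Provisiond's implementation: the function name is hypothetical, and it assumes that a node present in the inventory but absent from the requisition is deleted, in line with the statement that nodes are managed exactly as defined by the external source.

```python
def audit(existing_foreign_ids, requisition_foreign_ids):
    """Classify nodes of one foreign source by comparing foreign IDs.

    existing_foreign_ids: foreign IDs already in the inventory.
    requisition_foreign_ids: foreign IDs in the imported requisition.
    """
    existing = set(existing_foreign_ids)
    imported = set(requisition_foreign_ids)
    return {
        "add": sorted(imported - existing),      # new in the requisition
        "update": sorted(imported & existing),   # present in both
        "delete": sorted(existing - imported),   # no longer in the requisition
    }
```

With an inventory of foreign IDs 1, 2, 3 and a requisition containing 2, 3, 4, node 4 is added, nodes 2 and 3 are updated, and node 1 is deleted.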

4.2. Getting Started

OpenNMS Horizon installs with a base configuration that automatically begins service-level monitoring and reporting as soon as you provision managed entities (nodes, IP interfaces, SNMP interfaces, and services).

OpenNMS Horizon has three methods of provisioning:

- auto discovery
- directed discovery
- asset import through the ReST API

Use auto discovery if you do not have a "source of truth" for your network inventory; auto discovery can become that source. Be aware that auto discovery can generate too much information, including entities you do not want to monitor.

Directed discovery is effective if you know your inventory, particularly with smaller networks (i.e., 100–200 nodes). It is also useful for areas of your network that you cannot auto discover.

See the How It Works section of the introduction for more information on the provisioning process.

Regardless of the method, provisioning is an iterative process: you will need to fine-tune your results to exclude or add things to what you monitor.

4.2.1. Before You Begin

If you collect data via SNMP or are monitoring the availability of the SNMP service on a node, you must configure SNMP for provisioning before using auto or directed discovery. This ensures that OpenNMS Horizon can immediately scan newly discovered devices for entities. It also makes reporting and thresholding available for these devices.

In addition, you may want to edit the default foreign source definition to specify the services to detect and policies to apply during discovery.

4.2.2. Configuring SNMP for Provisioning

Proper SNMP configuration allows OpenNMS Horizon to understand network and node topology as well as to automatically enable performance data collection. OpenNMS Horizon updates network topology as it provisions nodes.

-

In the web UI, click the gear icon in the top right.

-

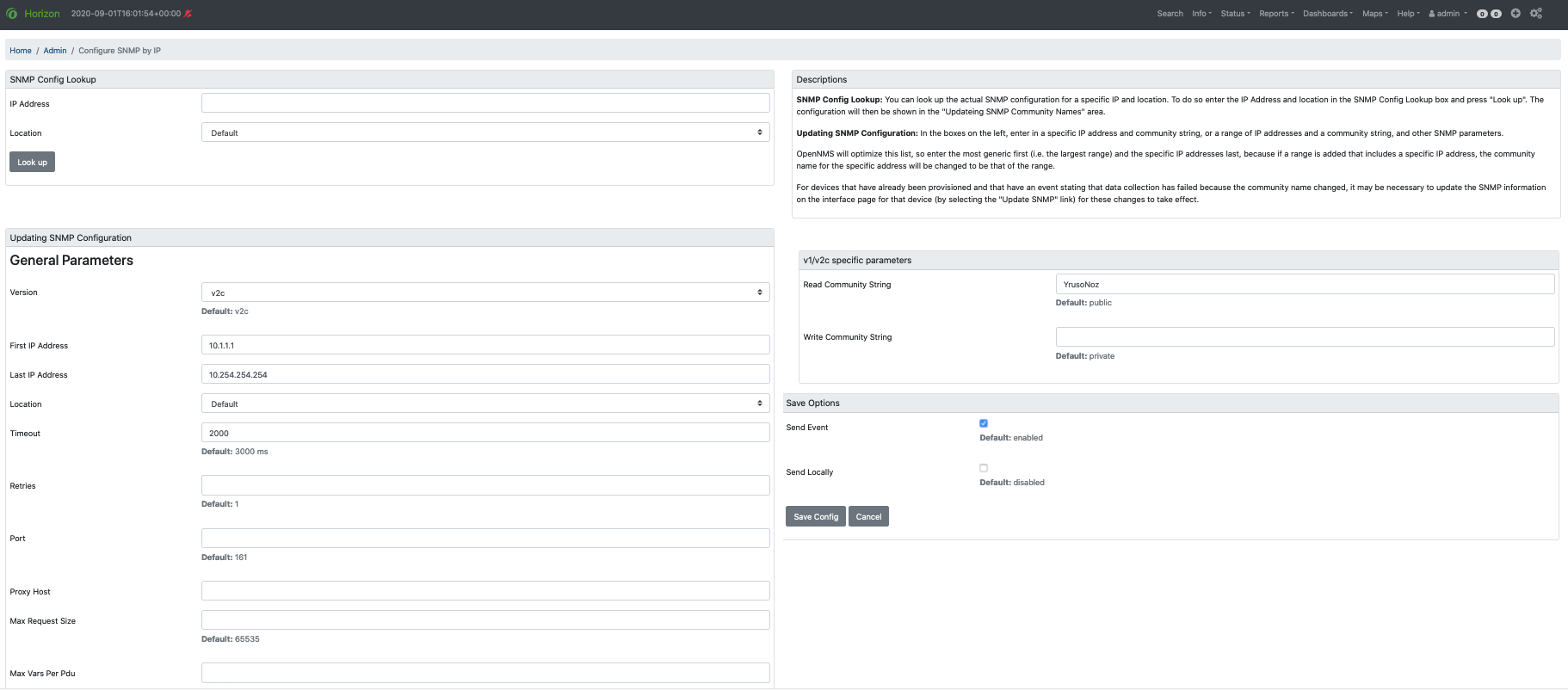

In the Provisioning area, choose Configure SNMP Community Names by IP Address, and fill in the fields as desired:

This screen sets up SNMP within OpenNMS Horizon for agents listening on IP addresses 10.1.1.1 through 10.254.254.254.

These settings are optimized into the snmp-config.xml file.

For an example of the resulting XML configuration, see Configuring SNMP community names.
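To check whether a given agent address falls inside a configured range such as 10.1.1.1 through 10.254.254.254, a comparison like the following can help. It is a minimal sketch using Python's standard `ipaddress` module; the function name is hypothetical, and it says nothing about how OpenNMS Horizon itself matches addresses.

```python
import ipaddress


def in_range(address, first, last):
    """True if address lies between first and last, inclusive.

    All three arguments must be the same IP version; comparing an IPv4
    address with an IPv6 address raises TypeError.
    """
    ip = ipaddress.ip_address(address)
    return ipaddress.ip_address(first) <= ip <= ipaddress.ip_address(last)
```

For the range shown in the screen above, `in_range("10.20.30.40", "10.1.1.1", "10.254.254.254")` is true, while `10.0.0.5` and `10.255.0.1` fall outside it.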

4.2.3. Edit Default Foreign Source Definition

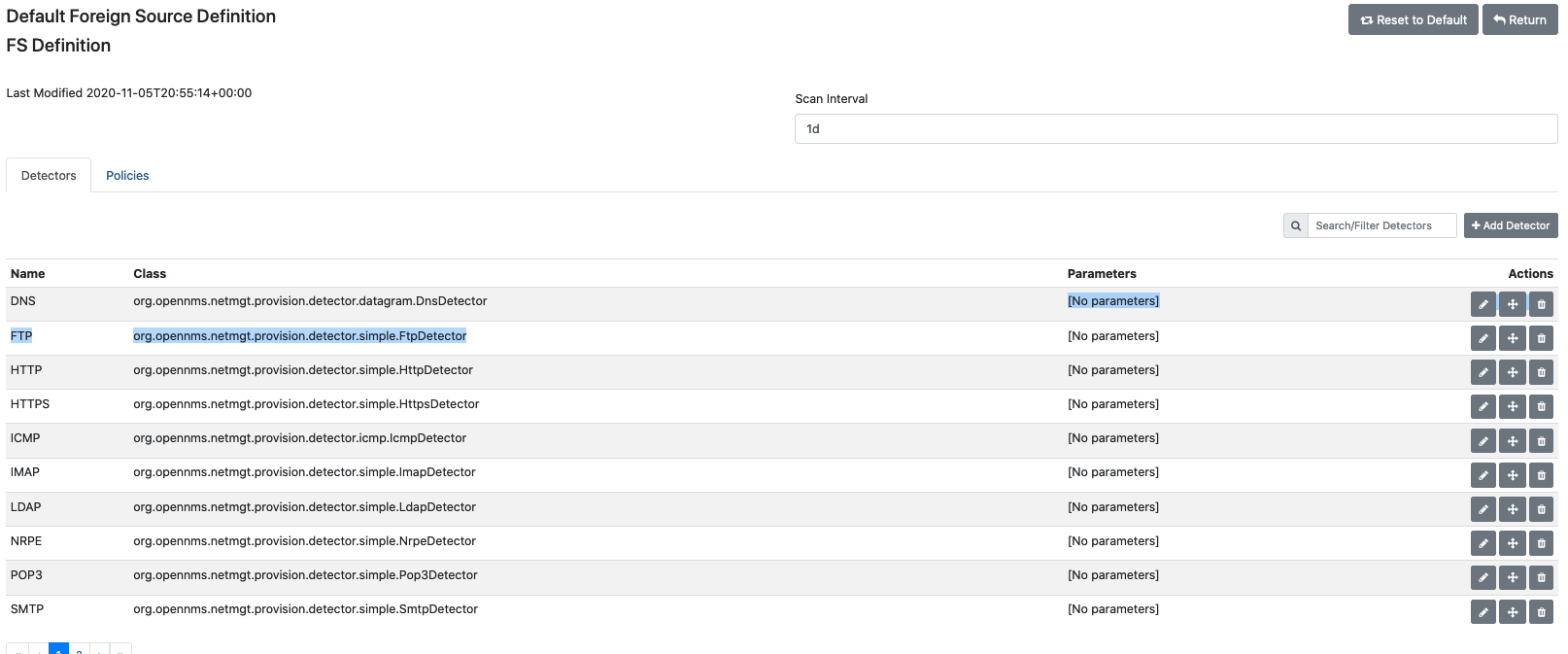

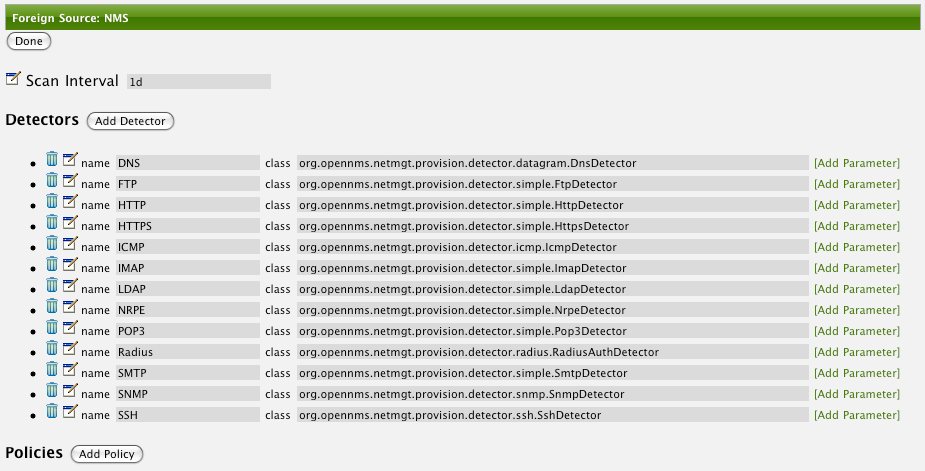

The default foreign source definition serves as a template that defines the services to detect on (DNS, FTP, ICMP, etc.), the scan interval for discovery, and the policies to use when provisioning.

Policies determine entity persistence and/or set attributes on the discovered entities that control OpenNMS Horizon management behavior. Provisiond applies the existing default foreign source definition unless you choose to modify it.

- In the web UI, click the gear icon in the top right.
- In the Provisioning area, choose Manage Provisioning Requisitions.
- Click Edit Default FS.

  The screen displays the list of service detectors and a tab to view and define policies. Provisiond scans the services in the order in which the detectors appear in the list.
- Click the appropriate icon to edit, delete, or move a service detector.
  - You can also add parameters to a detector (including retries, timeout, port, etc.) by clicking the Edit icon and choosing Add Parameter.
- Click Save.
- If desired, update the scan interval using one of the following:
  - w: weeks
  - d: days
  - h: hours
  - m: minutes
  - s: seconds
  - ms: milliseconds

  For example, to rescan every six days and 53 minutes, use 6d 53m. Specify 0 to disable automatic scanning.
- Click Save.
- Click the Policies tab in the Default Foreign Source Definition screen.
- Specify a name for the policy, select the class from the drop-down, and fill out any information associated with that class.
  - Use the space bar to see the options for the fields.
- (Optional) Click Add Parameter to add additional parameters to the class, or Save.
- Click Save.
- Repeat for any additional policies you want to add.
- Click Save at the top right to save the FS definition.

| To return to the default foreign source definition, click Reset to Default. |
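The scan interval notation used in the procedure above (w, d, h, m, s, ms, with 0 to disable) can be interpreted as follows. This is a sketch only, not the parser OpenNMS Horizon uses; it assumes components are separated by whitespace as in the 6d 53m example, and the function name is hypothetical.

```python
import re
from datetime import timedelta

# Maps each unit suffix from the guide to a timedelta keyword argument.
_UNIT_KWARGS = {
    "w": "weeks",
    "d": "days",
    "h": "hours",
    "m": "minutes",
    "s": "seconds",
    "ms": "milliseconds",
}
# "ms" is listed first so it is tried before "m" and "s".
_TOKEN = re.compile(r"(\d+)(ms|w|d|h|m|s)")


def parse_scan_interval(text):
    """Return the interval as a timedelta, or None if scanning is disabled."""
    text = text.strip()
    if text == "0":
        return None  # 0 disables automatic scanning
    parts = text.split()
    if not parts:
        raise ValueError("empty scan interval")
    total = timedelta()
    for part in parts:
        match = _TOKEN.fullmatch(part)
        if match is None:
            raise ValueError(f"unrecognized interval component: {part!r}")
        amount, unit = match.groups()
        total += timedelta(**{_UNIT_KWARGS[unit]: int(amount)})
    return total
```

For instance, `parse_scan_interval("6d 53m")` equals `timedelta(days=6, minutes=53)`, and `parse_scan_interval("0")` returns `None`.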

4.2.4. Create a Requisition

A requisition is a set of nodes (networked devices) that you want to import into OpenNMS Horizon for monitoring and management. You can iteratively build a requisition and later actually import the nodes in the requisition into OpenNMS Horizon. Doing so processes all of the adds/changes/deletes at once.

| Organize nodes with a similar network monitoring profile into a requisition, so that you can assign the same services, detectors, and policies to model the network monitoring behavior (e.g., routers, switches). |

This procedure describes how to create an empty requisition. Links to additional information on customizing a requisition appear at the end of the procedure.

-

In the web UI, click the gear icon in the top right.

-

In the Provisioning area, choose Manage Provisioning Requisitions.

-

If you haven’t already, edit the default foreign source definition to define services to detect.

-

Click Add Requisition, type a name, and click OK.

-

Click the edit icon beside the requisition you created.

-

(optional) Click Edit Definition to define the services, policies, and scan interval to use for this requisition.

-

Do this only if this requisition differs from the default foreign source definition already configured.

-

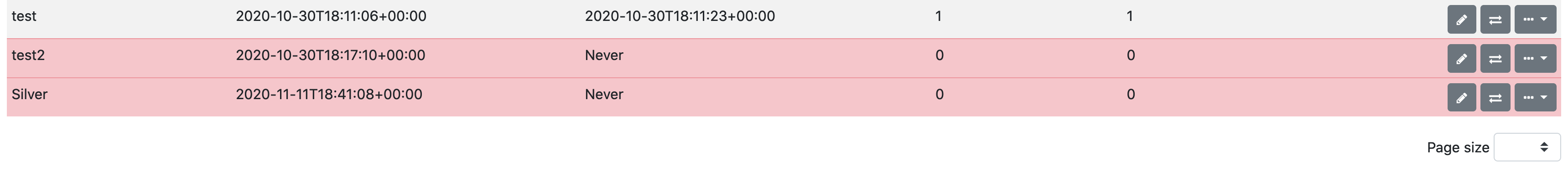

| The requisition remains red until you synchronize it with the database. |

Once you have created a requisition, you can:

- customize a requisition

4.3. Directed Discovery

Directed discovery is the process of manually adding nodes to OpenNMS Horizon through the requisition UI. Two other methods for manually adding nodes (quick add node and manually adding an interface) are in the process of being deprecated. We do not recommend using these features.

Make sure you complete the tasks in the Getting Started section before adding nodes.

4.3.1. Add Nodes through the Requisition UI

Before adding nodes to a requisition, you must create a requisition.

- In the web UI, click the gear icon in the top right.
- In the Provisioning area, choose Manage Provisioning Requisitions.
- Click the edit icon beside the requisition you want to add nodes to.
- Click Add Node.
  - OpenNMS Horizon auto-generates the foreign ID used to identify this node.
- Fill out information in each of the tabs and click Save.
  - basic information (node label, auto-generated foreign ID, location)
  - path outage (configure network path to limit notifications from nodes behind other nodes, see Path Outages)
  - interfaces (add interface IP addresses and services)
  - assets (pre-defined metadata types)
  - categories (label/tag for type of node, e.g., routers, production, switches)
  - meta-data (customized asset information)
- Repeat for each node you want to add.
- Click Return to view the list of nodes you have added.
- Click Synchronize to provision them to the OpenNMS Horizon database.

4.4. Auto Discovery

Auto discovery is the process of automatically adding nodes to OpenNMS Horizon. Discovery can run periodically on a schedule or as a single, unscheduled scan.

Make sure you complete the tasks in the Getting Started section before adding nodes.

4.4.1. Configure Discovery

Configuring discovery specifies the parameters OpenNMS Horizon uses when scanning for nodes.

- Click the gear icon and, in the Provisioning area, choose Configure Discovery.

  To configure a discovery scan to run once, select Run Single Discovery Scan.
- In the General Settings area, accept the default scheduling options (sleeptime, retries, timeout, etc.), or set your own.
- From the Foreign Source drop-down, select the requisition to which you want to add the discovered nodes.
- If you have installed Minions, select one from the Location drop-down.
- Click Add New to add the following:
  - specific address (IP addresses to add)
  - URLs
  - IP address ranges to include
  - IP address ranges to exclude
- Click Save and Restart Discovery.

  For a single discovery scan, click Start Discovery Scan.
- When the discovery is finished, navigate to the requisition (Manage Provisioning Requisitions) you specified to view the nodes discovered.
- If desired, edit the nodes or delete them from the requisition, then click Synchronize to add them to the OpenNMS Horizon database.
- Repeat this process for each requisition you want to provision.
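Before saving a discovery configuration, it can be useful to preview which addresses a combination of specific addresses, include ranges, and exclude ranges would cover. The sketch below models that with Python's `ipaddress` module. It is not the Discovery daemon's logic: the function names are hypothetical, only IPv4 is handled, and applying exclude ranges to specific addresses as well as to include ranges is an assumption of this example.

```python
import ipaddress


def expand(first, last):
    """Return the set of IPv4 addresses from first to last, inclusive."""
    start = int(ipaddress.IPv4Address(first))
    end = int(ipaddress.IPv4Address(last))
    if end < start:
        raise ValueError(f"range end {last} is before range start {first}")
    return {ipaddress.IPv4Address(n) for n in range(start, end + 1)}


def discovery_targets(specifics, include_ranges, exclude_ranges):
    """Return the sorted list of addresses (as strings) that would be scanned.

    Assumption: excludes are subtracted from both specifics and includes.
    """
    targets = {ipaddress.IPv4Address(a) for a in specifics}
    for first, last in include_ranges:
        targets |= expand(first, last)
    for first, last in exclude_ranges:
        targets -= expand(first, last)
    return [str(ip) for ip in sorted(targets)]
```

Because every address is materialized in memory, this preview is practical only for modest ranges; a very large include range is better checked with range arithmetic.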

4.5. Integrating with Provisiond

Use the ReST API for integration from other provisioning systems with OpenNMS Horizon. The ReST API provides an interface for defining foreign sources and requisitions.

4.5.1. Provisioning Groups of Nodes

Just as with the web UI, groups of nodes can be managed via the ReST API from an external system. The steps are:

- Update the foreign source definition for the group (if not using the default).
- Update the SNMP configuration for each node in the group.
- Create or update the group of nodes.

4.5.2. Example

Step 1 - Create a Foreign Source

To change the policies for this group of nodes you should create a foreign source for the group. You can do so using the ReST API:

The XML can be embedded in the curl command's -d option or referenced from a file by prefixing the file name with @, as in this case.

The XML file, customer-a.foreign-source.xml:

<?xml version="1.0" encoding="UTF-8" standalone="yes"?>
<foreign-source date-stamp="2009-10-12T17:26:11.616-04:00" name="customer-a" xmlns="http://xmlns.opennms.org/xsd/config/foreign-source">
  <scan-interval>1d</scan-interval>
  <detectors>
    <detector class="org.opennms.netmgt.provision.detector.icmp.IcmpDetector" name="ICMP"/>
    <detector class="org.opennms.netmgt.provision.detector.snmp.SnmpDetector" name="SNMP"/>
  </detectors>
  <policies>
    <policy class="org.opennms.netmgt.provision.persist.policies.MatchingIpInterfacePolicy" name="no-192-168">
      <parameter value="UNMANAGE" key="action"/>
      <parameter value="ALL_PARAMETERS" key="matchBehavior"/>
      <parameter value="~^192\.168\..*" key="ipAddress"/>
    </policy>
  </policies>
</foreign-source>

Here is an example curl command that creates the foreign source from the specification above:

curl -v -u admin:admin -X POST -H 'Content-type: application/xml' -d '@customer-a.foreign-source.xml' http://localhost:8980/opennms/rest/foreignSources

Now that you’ve created the foreign source, it needs to be deployed by Provisiond.

Here is an example that uses the curl command to deploy the foreign source:

curl -v -u admin:admin http://localhost:8980/opennms/rest/foreignSources/pending/customer-a/deploy -X PUT

| The current API doesn’t strictly follow the ReST design guidelines and will be updated in a later release. |
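The two curl calls above can also be issued from a script. The following sketch builds equivalent requests with Python's standard library; the helper names are hypothetical, and the base URL and admin credentials are simply the ones used in the curl examples. Nothing is sent until a request is passed to `urllib.request.urlopen`.

```python
import base64
import urllib.parse
import urllib.request

# Same endpoint as in the curl examples; adjust for your server.
BASE_URL = "http://localhost:8980/opennms/rest"


def _request(method, path, user, password, body=None):
    """Build (but do not send) a request with HTTP basic authentication."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    headers = {"Authorization": f"Basic {token}"}
    if body is not None:
        headers["Content-Type"] = "application/xml"
    return urllib.request.Request(
        f"{BASE_URL}{path}", data=body, headers=headers, method=method
    )


def create_foreign_source(xml_bytes, user, password):
    """POST a foreign source definition (the XML document as bytes)."""
    return _request("POST", "/foreignSources", user, password, xml_bytes)


def deploy_foreign_source(name, user, password):
    """PUT to the pending foreign source's deploy URL."""
    quoted = urllib.parse.quote(name, safe="")
    return _request("PUT", f"/foreignSources/pending/{quoted}/deploy", user, password)
```

A typical use would read customer-a.foreign-source.xml in binary mode, pass its contents to `create_foreign_source`, send the result with `urllib.request.urlopen`, and then do the same with `deploy_foreign_source("customer-a", ...)`.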

Step 2 - Update the SNMP configuration

The implementation only supports a PUT request because it is an implied "Update" of the configuration since it requires an IP address and all IPs have a default configuration.

This request is passed to the SNMP configuration factory in OpenNMS Horizon for optimization of the configuration store snmp-config.xml.

This example changes the community string for the IP address 10.1.1.1 to yRuSonoZ.

| Community string is the only required element |
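As a sketch of how a script might assemble the request body for this PUT, the following Python function serializes an snmp-info element in which only the community string is mandatory. The function name snmp_info_xml is hypothetical; the element names are the ones used in the curl example in this step.

```python
import xml.etree.ElementTree as ET


def snmp_info_xml(community, port=None, retries=None, timeout=None, version=None):
    """Serialize an <snmp-info> body; only the community string is mandatory."""
    root = ET.Element("snmp-info")
    ET.SubElement(root, "community").text = community
    optional = {"port": port, "retries": retries, "timeout": timeout, "version": version}
    for tag, value in optional.items():
        # Optional elements are emitted only when a value was supplied.
        if value is not None:
            ET.SubElement(root, tag).text = str(value)
    return ET.tostring(root, encoding="unicode")
```

Calling snmp_info_xml("yRuSonoZ") yields a body containing only the community element, which is the smallest request the note above allows.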

curl -v -X PUT -H "Content-Type: application/xml" -H "Accept: application/xml" -d "<snmp-info><community>yRuSonoZ</community><port>161</port><retries>1</retries><timeout>2000</timeout><version>v2c</version></snmp-info>" -u admin:admin http://localhost:8980/opennms/rest/snmpConfig/10.1.1.1

Step 3 - Create/Update the Requisition

This example adds 2 nodes to the provisioning group customer-a. The foreign-source attribute typically has a one-to-one relationship with the name of the provisioning group requisition, and it ties the requisition directly to the foreign source policy specification of the same name. In the following example, the ReST API automatically creates a provisioning group based on the value of the foreign-source attribute specified in the XML requisition.

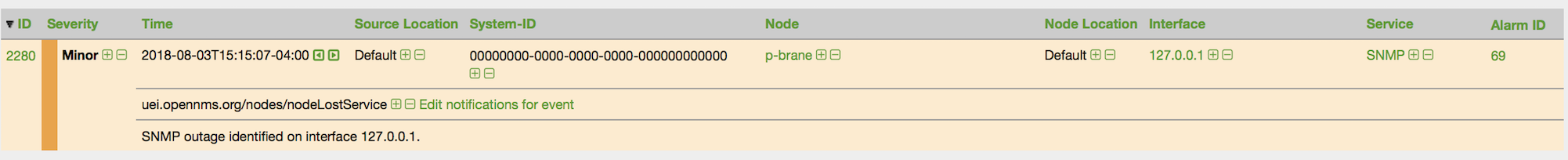

curl -X POST -H "Content-Type: application/xml" -d '<?xml version="1.0" encoding="UTF-8"?><model-import xmlns="http://xmlns.opennms.org/xsd/config/model-import" date-stamp="2009-03-07T17:56:53.123-05:00" last-import="2009-03-07T17:56:53.117-05:00" foreign-source="customer-a"><node node-label="p-brane" foreign-id="1" ><interface ip-addr="10.0.1.3" descr="en1" status="1" snmp-primary="P"><monitored-service service-name="ICMP"/><monitored-service service-name="SNMP"/></interface><category name="Production"/><category name="Routers"/></node><node node-label="m-brane" foreign-id="2" ><interface ip-addr="10.0.1.4" descr="en1" status="1" snmp-primary="P"><monitored-service service-name="ICMP"/><monitored-service service-name="SNMP"/></interface><category name="Production"/><category name="Routers"/></node></model-import>' -u admin:admin http://localhost:8980/opennms/rest/requisitions

After this curl command completes successfully, a provisioning group file called etc/imports/customer-a.xml exists on the OpenNMS Horizon system and is also visible via the WebUI.

| Add, Update, and Delete operations are handled via the ReST API in the same manner as described in the detailed specification. |
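Writing the requisition XML by hand on a single command line is error-prone. The following Python sketch generates a comparable model-import document from a list of (label, IP address) pairs. The function name build_requisition is hypothetical, numbering foreign IDs from 1 is an illustrative choice, and the sketch omits the categories, descriptions, and time stamps used in the curl example.

```python
import xml.etree.ElementTree as ET

NAMESPACE = "http://xmlns.opennms.org/xsd/config/model-import"


def build_requisition(foreign_source, nodes):
    """Return model-import XML for (label, ip) pairs, numbering foreign IDs from 1."""
    root = ET.Element("model-import", {"xmlns": NAMESPACE, "foreign-source": foreign_source})
    for foreign_id, (label, ip_addr) in enumerate(nodes, start=1):
        node = ET.SubElement(root, "node", {"node-label": label, "foreign-id": str(foreign_id)})
        # Each node gets one primary interface with the two services from the example.
        iface = ET.SubElement(node, "interface", {"ip-addr": ip_addr, "snmp-primary": "P"})
        for service in ("ICMP", "SNMP"):
            ET.SubElement(iface, "monitored-service", {"service-name": service})
    return ET.tostring(root, encoding="unicode")
```

The returned string can be used as the body of the POST to the requisitions endpoint shown in Step 3.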

4.6. Import Handlers

The new Provisioning service in OpenNMS Horizon is continuously improving and adapting to the needs of the community.

One of the most recent enhancements to the system is built upon the very flexible and extensible API of referencing an import requisition’s location via a URL.

Most commonly, these URLs are files on the file system (e.g. file:/opt/opennms/etc/imports/<my-provisioning-group.xml>) as requisitions created by the Provisioning Groups UI.

However, these same requisitions for adding, updating, and deleting nodes (based on the original model importer) can also come from other URLs.

For example, a requisition can be retrieved using the HTTP protocol: http://myinventory.server.org/nodes.cgi

In addition to the standard protocols supported by Java, we provide a series of custom URL handlers to help retrieve requisitions from external sources.

4.6.1. Generic Handler

The generic handler is made available using URLs of the form: requisition://type?param=1;param=2

Using these URLs various type handlers can be invoked, both locally and via a Minion.

In addition to the type specific parameters, the following parameters are supported:

| Parameter | Description | Required | Default value |
|---|---|---|---|
| location | The name of the location at which the handler should be run | optional | Default |
| ttl | The maximum number of milliseconds to wait for the handler when run remotely | optional | 20000 |

See the relevant sections below for additional details on the supported types.

The opennms:show-import command available via the Karaf Shell can be used to show the results of an import (without persisting or triggering the import):

opennms:show-import -l MINION http url=http://127.0.0.1:8000/req.xml

4.6.2. File Handler

Examples:

file:///path/to/my/requisition.xml

requisition://file?path=/path/to/my/requisition.xml;location=MINION

4.6.3. HTTP Handler

Examples:

http://myinventory.server.org/nodes.cgi

requisition://http?url=http%3A%2F%2Fmyinventory.server.org%2Fnodes.cgi

| When using the generic handler, the URL should be "URL encoded". |
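The encoding step can be done with a standard library call. This Python sketch builds a generic handler URL of the form requisition://type?param=1;param=2 and percent-encodes every parameter value. The function name generic_requisition_url is hypothetical, and encoding all values is a conservative assumption (the file handler example above leaves the slashes in its path unencoded).

```python
from urllib.parse import quote


def generic_requisition_url(handler_type, **params):
    """Build requisition://type?key=value;key=value with URL-encoded values."""
    # safe='' makes quote() encode ':' and '/' as well, as in the HTTP handler example.
    query = ";".join(f"{key}={quote(str(value), safe='')}" for key, value in params.items())
    return f"requisition://{handler_type}?{query}"
```

For the HTTP handler, generic_requisition_url("http", url="http://myinventory.server.org/nodes.cgi") reproduces the encoded URL shown in the examples above.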

4.6.4. DNS Handler

The DNS handler sends a zone transfer (AXFR) request to a DNS server. The A records in the response are used to build an import requisition. This is handy for organizations that use DNS (possibly coupled with an IP management tool) as the database of record for nodes in the network. Rather than ping sweeping the network or entering the nodes manually into the OpenNMS Horizon Provisioning UI, nodes can be managed via one or more DNS servers.

The format of the URL for this new protocol handler is: dns://<host>[:port]/<zone>[/<foreign-source>/][?expression=<regex>]

DNS Import Examples:

dns://my-dns-server/myzone.com

This URL imports all A records from the zone "myzone.com" on the host my-dns-server, port 53 (the default port). Because the foreign source (a.k.a. the provisioning group) is not specified, it defaults to the specified zone.

dns://my-dns-server/myzone.com/portland/?expression=^por-.*

This URL imports nodes from the same server and zone, but only manages the nodes in the zone that match the regular expression ^por-.*. These nodes are assigned their own foreign source (provisioning group), portland, so they can be managed as a subset of the nodes in the specified zone.

If your expression requires URL encoding (for example you need to use a ? in the expression) it must be properly encoded.

dns://my-dns-server/myzone.com/portland/?expression=^por[0-9]%3F
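To make the URL format concrete, the following Python sketch splits a dns:// import URL into the components described above and applies the documented defaults (port 53, foreign source equal to the zone). It illustrates the format only and is not the handler's actual implementation; the function name parse_dns_url is hypothetical.

```python
from urllib.parse import parse_qs, urlsplit


def parse_dns_url(url):
    """Split a dns:// import URL into its documented components."""
    parts = urlsplit(url)
    if parts.scheme != "dns":
        raise ValueError("expected a dns:// URL")
    segments = [segment for segment in parts.path.split("/") if segment]
    if not segments:
        raise ValueError("a zone is required")
    zone = segments[0]
    # The foreign source defaults to the zone when it is not given.
    foreign_source = segments[1] if len(segments) > 1 else zone
    # parse_qs decodes percent-encoding, so %3F becomes '?'.
    expression = parse_qs(parts.query).get("expression", [None])[0]
    return {
        "host": parts.hostname,
        "port": parts.port or 53,
        "zone": zone,
        "foreign_source": foreign_source,
        "expression": expression,
    }
```

Parsing the last example URL above returns portland as the foreign source and the decoded expression ^por[0-9]?.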

Currently, the DNS server must be set up to allow a zone transfer from the OpenNMS Horizon server. It is recommended that a secondary DNS server runs on the OpenNMS Horizon server and that the OpenNMS Horizon server is allowed to request a zone transfer. A quick way to test whether zone transfers are working is:

dig -t AXFR @<dnsServer> <zone>

The configuration of the Provisioning system has moved from a properties file (model-importer.properties) to an XML-based configuration container.

The configuration is now extensible to allow the definition of zero or more import requisitions, each with its own cron-based schedule for automatic importing from various sources (intended for integration with external URLs such as http and this new dns protocol handler).

A default configuration is provided in the OpenNMS Horizon etc/ directory and is called provisiond-configuration.xml.

This default configuration contains an example that schedules an import from a DNS server running on localhost, requesting nodes from the zone localhost once per day at midnight.

This is not very practical, but it is a good example.

<?xml version="1.0" encoding="UTF-8"?>

<provisiond-configuration xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://xmlns.opennms.org/xsd/config/provisiond-configuration"

foreign-source-dir="/opt/opennms/etc/foreign-sources"

requistion-dir="/opt/opennms/etc/imports"

importThreads="8"

scanThreads="10"

rescanThreads="10"

writeThreads="8" >

<!--
http://www.quartz-scheduler.org/documentation/quartz-1.x/tutorials/crontrigger
Field Name     Allowed Values     Allowed Special Characters
Seconds        0-59               , - * /
Minutes        0-59               , - * /
Hours          0-23               , - * /
Day-of-month   1-31               , - * ? / L W C
Month          1-12 or JAN-DEC    , - * /
Day-of-Week    1-7 or SUN-SAT     , - * ? / L C #
Year (Opt)     empty, 1970-2099   , - * /
-->

<requisition-def import-name="localhost"

import-url-resource="dns://localhost/localhost">

<cron-schedule>0 0 0 * * ? *</cron-schedule> <!-- daily, at midnight -->

</requisition-def>

</provisiond-configuration>

Like many of the daemon configurations in the 1.7 branch, this configuration can be reloaded without restarting OpenNMS Horizon by sending the reloadDaemonConfig UEI:

/opt/opennms/bin/send-event.pl uei.opennms.org/internal/reloadDaemonConfig --parm 'daemonName Provisiond'

This means that you don’t have to restart OpenNMS Horizon every time you update the configuration.
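The cron-schedule value in the configuration above follows the Quartz field order listed in its comment. As a reading aid, this Python sketch labels each field of such an expression; it does not validate the values, and the names QUARTZ_FIELDS and label_cron_fields are hypothetical.

```python
QUARTZ_FIELDS = ("seconds", "minutes", "hours", "day_of_month", "month", "day_of_week", "year")


def label_cron_fields(expression):
    """Map each field of a Quartz cron expression to its name (year is optional)."""
    values = expression.split()
    if len(values) not in (6, 7):
        raise ValueError("a Quartz cron expression has 6 or 7 fields")
    # zip() stops at the shorter sequence, so a 6-field expression has no "year" key.
    return dict(zip(QUARTZ_FIELDS, values))
```

Applied to 0 0 0 * * ? *, the seconds, minutes, and hours fields are all 0, which is why the comment in the configuration describes the schedule as daily at midnight.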

4.7. Provisioning Examples

Here are a few practical examples of enhanced directed discovery to help with your understanding of this feature.

4.7.1. Basic Provisioning

This example adds three nodes and requires no OpenNMS Horizon configuration other than specifying the node entities to be provisioned and managed in OpenNMS Horizon.

Defining the Nodes via the Web-UI

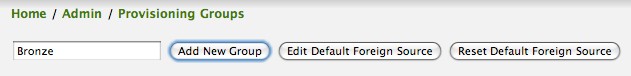

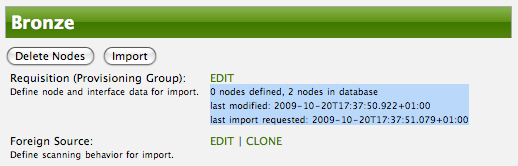

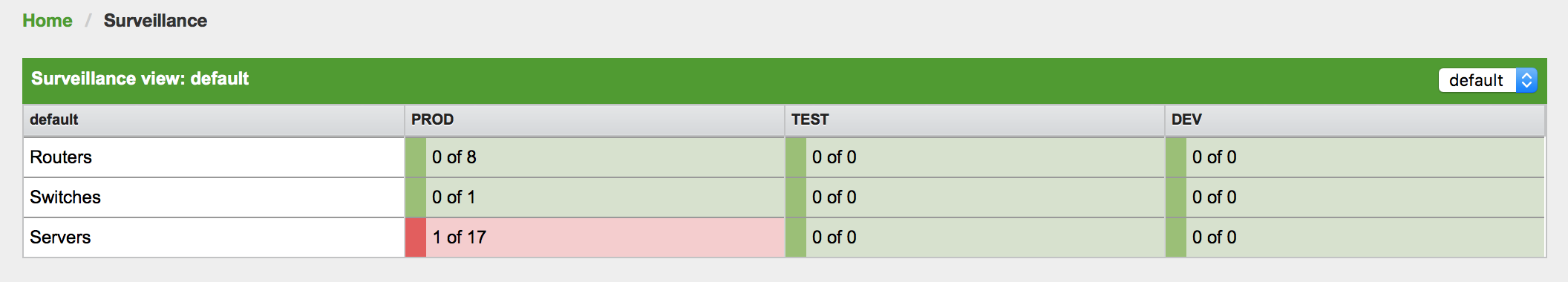

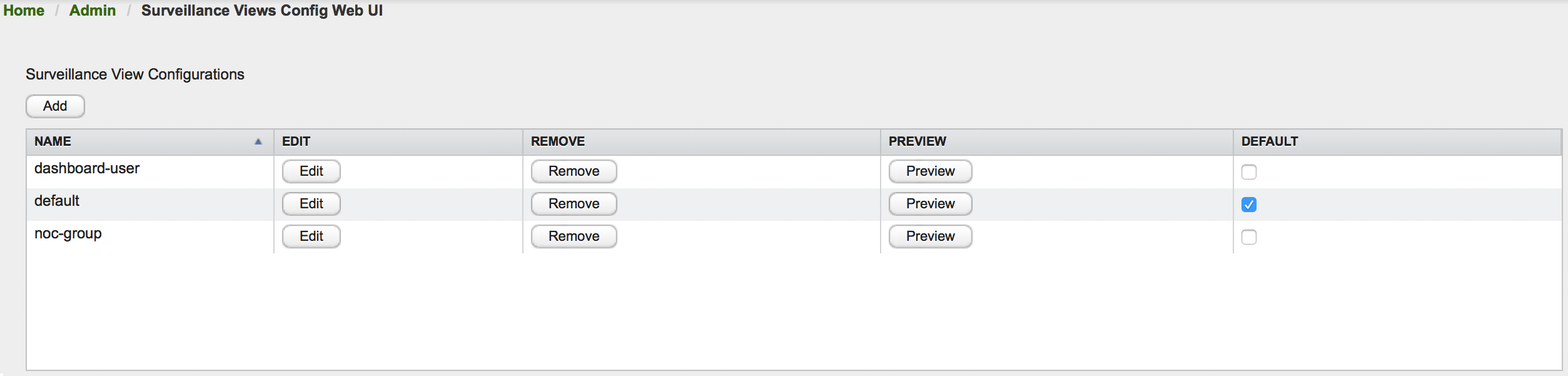

Using the Provisioning Groups Web-UI, three nodes are created, each with a single IP address. Navigate to the Admin menu, click Provisioning Groups in the list of Admin options, and create the group Bronze.

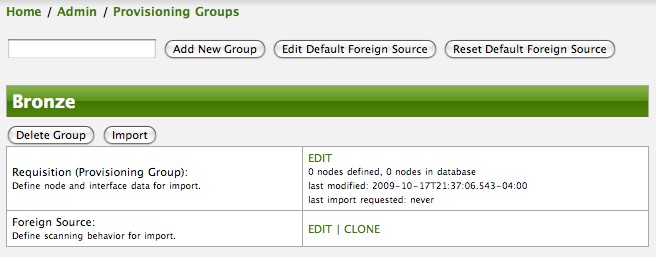

Clicking the Add New Group button will create the group and will redisplay the page including this new group among the list of any group(s) that have already been created.

| At this point, the XML structure for holding the new provisioning group (a.k.a. an import requisition) has been persisted to the '$OPENNMS_ETC/imports/pending' directory. |

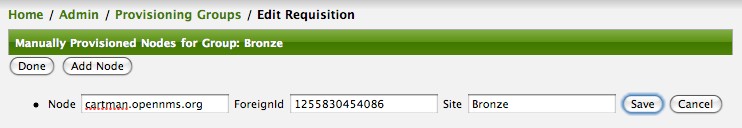

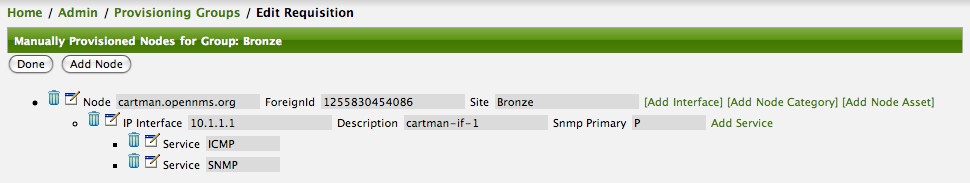

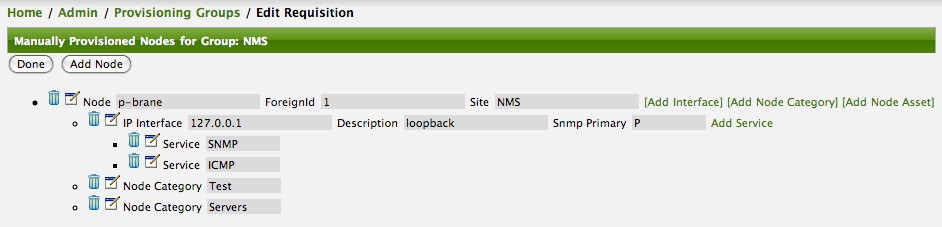

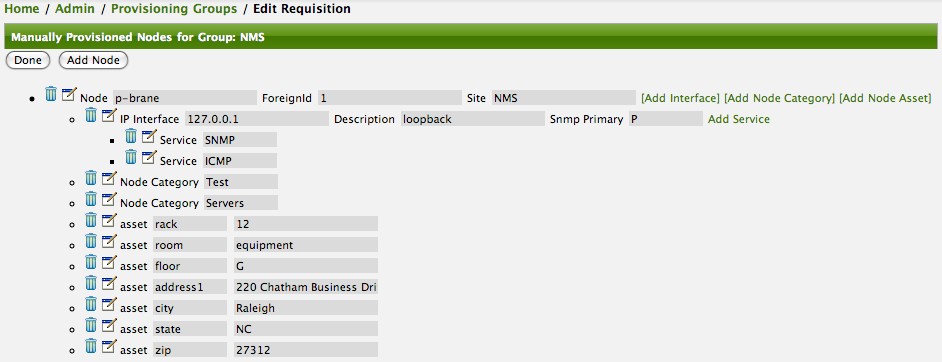

Clicking the Edit link brings you to the screen where you can begin defining the node entities that will be imported into OpenNMS Horizon. Click the Add Node button to begin creating a node entity, fill in the node label, and click the Save button.

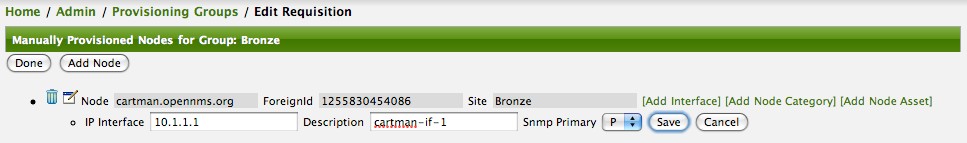

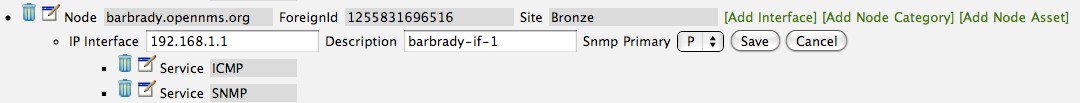

At this point, the provisioning group contains the basic structure of a node entity, but it is not complete until the interface(s) and interface service(s) have been defined. After you click the Save button, the Web-UI presents the options Add Interface, Add Node Category, and Add Node Asset. Click the Add Interface link to add an interface entity to the node.

Enter the IP address for this interface entity and a description, specify the Primary attribute as P (Primary), S (Secondary), N (Not collected), or C (Collected), and click the Save button.

Now the node entity has an interface for which services can be defined, and the Web-UI presents the Add Service link.

Add two services (ICMP, SNMP) via this link.

Now the node entity definition contains all the elements required for importing this requisition into OpenNMS Horizon. At this point, all the interfaces that are required for the node should be added. For example, NAT interfaces should be specified if they provide services, because they will not be discovered during the Scan Phase.

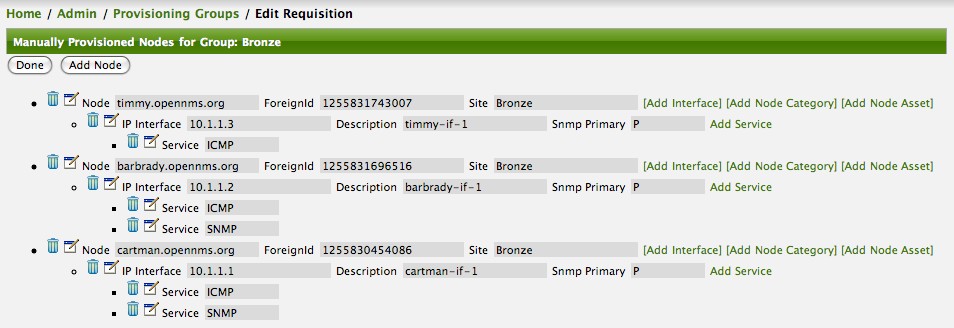

Two more node definitions will be added for the benefit of this example.

This set of nodes represents an import requisition for the Bronze provisioning group.

As this requisition is edited via the WebUI, changes are persisted into the OpenNMS Horizon configuration directory '$OPENNMS_ETC/imports/pending' as an XML file named bronze.xml.

| The name of the XML file containing the import requisition is the same as the provisioning group name. Therefore naming your provisioning group without the use of spaces makes them easier to manage on the file system. |

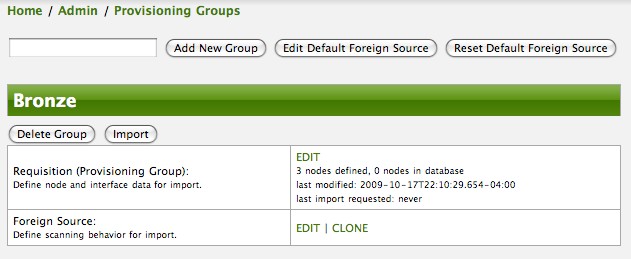

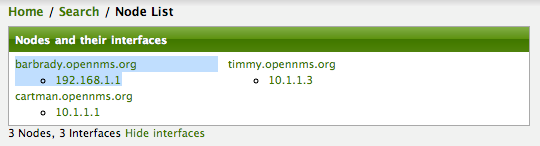

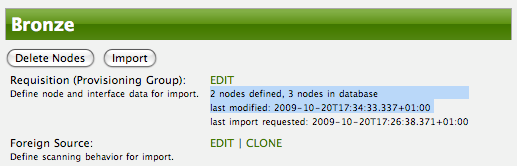

Click the Done button to return to the Provisioning Groups list screen. The details of the “Bronze” group now indicate that there are 3 nodes in the requisition and that there are no nodes in the DB from this group (a.k.a. foreign source). Additionally, you can see the time the requisition was last modified and the time it was last imported (the time stamps are stored as attributes inside the requisition and are not the file system time stamps). These details indicate how well the DB represents what is in the requisition.

| You can tell that this is a pending requisition for 2 reasons: 1) there are 3 nodes defined and 0 nodes in the DB, 2) the requisition has been modified since the last import (in this case never). |
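The two indicators in the note above can be written as a small check. This Python sketch is only an illustration of that reasoning, not how the Web-UI computes its display; the function name looks_pending and its parameters are hypothetical.

```python
from datetime import datetime
from typing import Optional


def looks_pending(nodes_defined: int, nodes_in_db: int,
                  last_modified: datetime, last_import: Optional[datetime]) -> bool:
    """Apply the two indicators from the note; either one suggests a pending requisition."""
    counts_differ = nodes_defined != nodes_in_db
    # A requisition that was never imported counts as modified since the last import.
    modified_since_import = last_import is None or last_modified > last_import
    return counts_differ or modified_since_import
```

For the Bronze group at this point in the example, there are 3 nodes defined, 0 in the DB, and no import has happened yet, so both indicators are true.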

Import the Nodes

In this example, you see that there are 3 nodes in the pending requisition and 0 in the DB. Click the Import button to submit the requisition to the provisioning system (what actually happens is that the Web-UI sends an event to the Provisioner telling it to begin the Import Phase for this group).

| Do not refresh this page to check the values of these details. To refresh the details to verify the import, click the Provisioning Groups bread crumb item. |

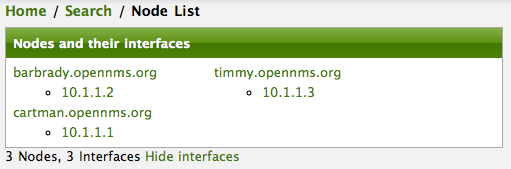

You should be able to immediately verify the importation of this provisioning group because the import happens very quickly. Provisiond has several threads ready for processing the import operations of the nodes defined in this requisition.

A few SNMP packets are sent and received to get the SNMP details of the node and the interfaces defined in the requisition. Upon receipt of these packets (or not) each node is inserted as a DB transaction.

Following the import of a node with thousands of interfaces, you will be able to refresh the Interface table browser on the Node page and see that interfaces and services are being discovered and added in the background. This is the discovery component of directed discovery.

To direct that another node be added from a foreign source (in this example the Bronze Provisioning Group) simply add a new node definition and re-import. It is important to remember that all the node definitions will be re-imported and the existing managed nodes will be updated, if necessary.

Changing a Node

To direct changes to an existing node, simply add, change, or delete elements or attributes of the node definition and re-import. This is a benefit of directing specific elements of a node in the requisition: those attributes are simply changed on import. For example, to change the IP address of the Primary SNMP interface for the node barbrady.opennms.org, just change the requisition and re-import.

Each element in the Web-UI has an associated Edit icon. Click this icon to change the IP address for barbrady.opennms.org, click Save, and then click the Done button.

The Web-UI returns you to the Provisioning Groups screen, where the time stamps show that the requisition’s last modification is more recent than the last import time.

This provides an indication that the group must be re-imported for the changes made to the requisition to take effect. The IP Interface will be simply updated and all the required events (messages) will be sent to communicate this change within OpenNMS Horizon.

Deleting a Node

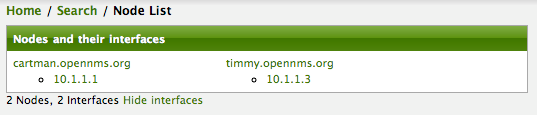

Barbrady has not been behaving as one might expect, so it is time to remove him from the system. Edit the provisioning group, click the delete button next to the node barbrady.opennms.org, and click the Done button.

Click the Import button for the Bronze group and the Barbrady node and its interfaces, services, and any other related data will be immediately deleted from the OpenNMS Horizon system. All the required Events (messages) will be sent by Provisiond to provide indication to the OpenNMS Horizon system that the node Barbrady has been deleted.

Deleting all the Nodes

There is a convenient way to delete all the nodes that have been provided from a specific foreign source.

From the main Admin/Provisioning Groups screen in the Web-UI, click the Delete Nodes button.

This button deletes all the nodes defined in the Bronze requisition.

It is very important to note that once this is done, it cannot be undone!

Well, it can’t be undone from the Web-UI; it can only be undone if you’ve been good about keeping a backup copy of your '$OPENNMS_ETC/' directory tree.

If you’ve made a mistake, before you re-import the requisition, restore the Bronze.xml requisition from your backup copy to the '$OPENNMS_ETC/imports' directory.

Clicking the Import button will cause the Audit Phase of Provisiond to determine that all the nodes from the Bronze group (foreign source) should be deleted from the DB, and it will create Delete operations. Once the nodes have been deleted, the Delete Nodes button changes to the Delete Group button. If you no longer need nodes to be defined in this group, you can delete it.

The Delete Group button is displayed only when no node entities from that group (foreign source) exist in OpenNMS Horizon.

4.7.2. Advanced Provisioning Example

In the previous example, we provisioned 3 nodes and let Provisiond complete all of its import phases using a default foreign source definition. Each Provisioning Group can have a separate foreign source definition that controls:

-

The rescan interval

-

The services to be detected

-

The policies to be applied

This example will demonstrate how to create a foreign source definition and how it is used to control the behavior of Provisiond when importing a Provisioning Group/foreign source requisition.

First let’s simply provision the node and let the default foreign source definition apply.

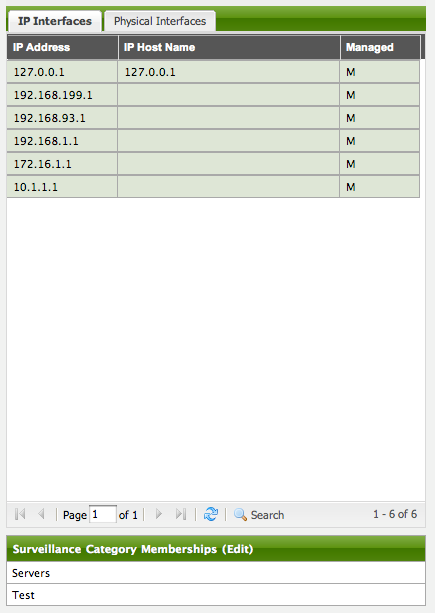

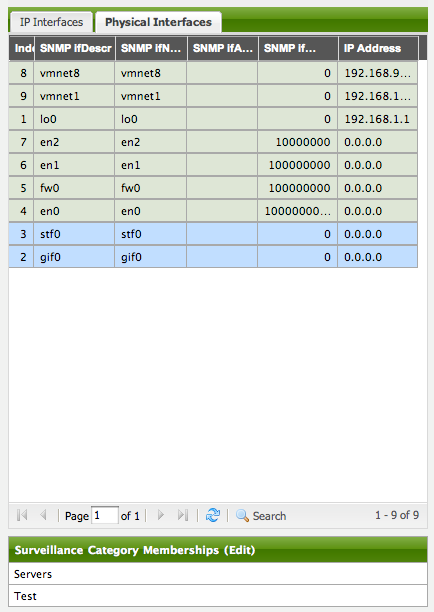

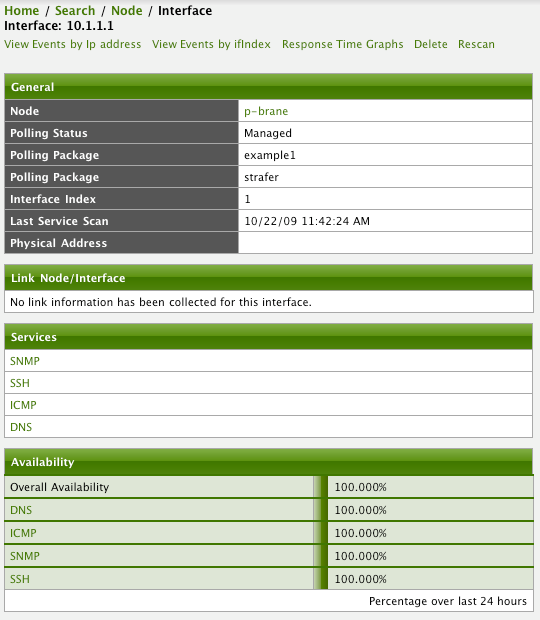

Following the import, all the IP and SNMP interfaces, in addition to the interface specified in the requisition, have been discovered and added to the node entity. The default foreign source definition has no policies for controlling which discovered interfaces are persisted or managed by OpenNMS Horizon.

Service Detection

As IP interfaces are found during the node scan process, service detection tasks are scheduled for each IP interface. The service detectors defined in the foreign source determine which services are to be detected and how (i.e. the values of the parameters that control how the service is detected: port, timeout, etc.).

Applying a New Foreign Source Definition

This example node has been provisioned using the Default foreign source definition. By navigating to the Provisioning Groups screen in the OpenNMS Horizon Web-UI and clicking the Edit Foreign Source link of a group, you can create a new foreign source definition that defines service detection and policies. The policies determine entity persistence and/or set attributes on the discovered entities that control OpenNMS Horizon management behaviors.

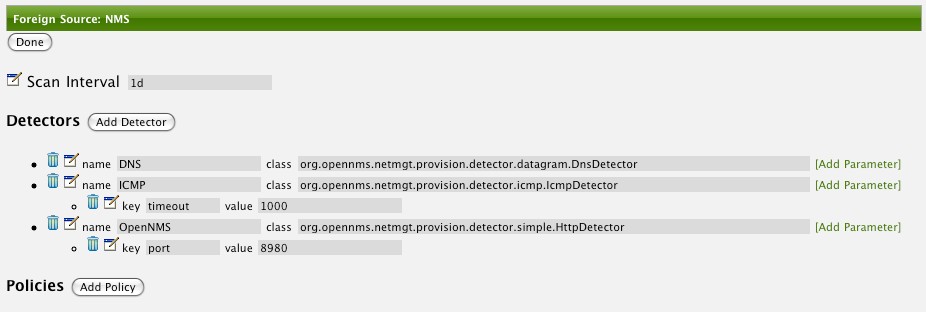

In this UI, new detectors can be added, changed, and removed. For this example, we will remove detection of all services except ICMP and DNS, change the timeout of ICMP detection, and add a new service detector for the OpenNMS Horizon Web-UI.

Click the Done button and re-import the NMS Provisioning Group. During this and any subsequent re-imports or re-scans, the OpenNMS Horizon detector will be active, and the detectors that have been removed will no longer test for the related services on the interfaces of nodes managed in the provisioning group (requisition). However, the currently detected services will not be removed. There are 2 ways to delete the previously detected services:

-

Delete the node in the provisioning group, re-import, define it again, and finally re-import again

-

Use the ReST API to delete unwanted services. Use this command to remove each unwanted service from each interface, iteratively:

curl -X DELETE -H "Content-Type: application/xml" -u admin:admin http://localhost:8980/opennms/rest/nodes/6/ipinterfaces/172.16.1.1/services/DNS
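Because the DELETE must be repeated for each service on each interface, a short loop is convenient. This Python sketch builds one request per interface/service pair using the same URL layout as the curl command above. The function name service_delete_requests is hypothetical, authentication is omitted for brevity, and the requests are built but not sent.

```python
import urllib.request
from urllib.parse import quote

BASE_URL = "http://localhost:8980/opennms/rest"  # same base URL as the curl example


def service_delete_requests(node_id, services_by_ip):
    """Build (but do not send) one DELETE request per interface/service pair."""
    requests = []
    for ip_addr, services in services_by_ip.items():
        for service in services:
            # Path segments are percent-encoded in case a service name contains
            # characters that are not URL-safe.
            url = (f"{BASE_URL}/nodes/{node_id}/ipinterfaces/"
                   f"{quote(ip_addr, safe='')}/services/{quote(service, safe='')}")
            requests.append(urllib.request.Request(url, method="DELETE"))
    return requests
```

Each returned request can be inspected before it is sent with urllib.request.urlopen, after adding credentials equivalent to the -u admin:admin option of the curl command.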

| There is a sneaky way to do #1. Edit the provisioning group and just change the foreign ID. That will make Provisiond think that a node was deleted and a new node was added in the same requisition! Use this hint with caution and a full understanding of the impact of deleting an existing node. |

Provisioning with Policies

The Policy API in Provisiond allows you to control the persistence of discovered IP and SNMP Interface entities and Node Categories during the Scan phase.

The Matching IP Interface policy controls whether discovered interfaces are to be persisted and if they are to be persisted, whether or not they will be forced to be Managed or Unmanaged.

Continuing with this example Provisioning Group, we are going to define a few policies that:

-

Prevent discovered 10 network addresses from being persisted

-

Force 192.168 network addresses to be unmanaged

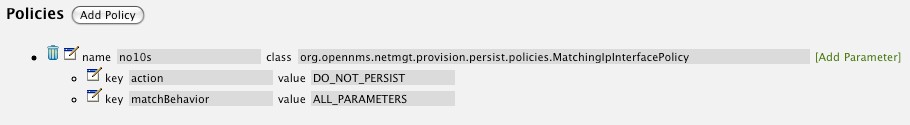

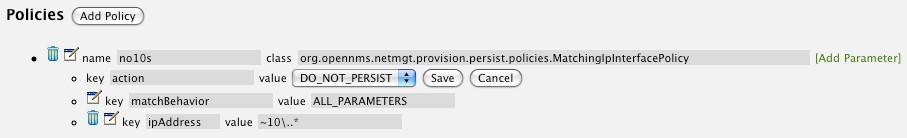

From the foreign source definition screen, click the Add Policy button and the definition of a new policy will begin with a field for naming the policy and a drop down list of the currently installed policies. Name the policy no10s, make sure that the Match IP Interface policy is specified in the class list and click the Save button. This action will automatically add all the parameters required for the policy.

The two required parameters for this policy are action and matchBehavior.

The DO_NOT_PERSIST action does just what it indicates: it prevents discovered IP interface entities from being added to OpenNMS Horizon when the matchBehavior is satisfied. The MANAGE and UNMANAGE values for this action allow the IP interface entity to be persisted but control whether or not that interface should be managed by OpenNMS Horizon.

The matchBehavior parameter is a boolean control that determines how the optional parameters will be evaluated. Setting this parameter’s value to ALL_PARAMETERS causes Provisiond to evaluate the optional parameters with boolean AND logic, and the value ANY_PARAMETERS causes OR logic to be applied.

Now we will add one of the optional parameters to filter the 10 network addresses.

The Matching IP Interface policy supports two additional parameters, hostName and ipAddress.

Click the Add Parameter link and choose ipAddress as the key.

The value for either of the optional parameters can be an exact or regular expression match.

As in most configurations in OpenNMS Horizon where regular expression matching can be optionally applied, prefix the value with the ~ character.
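The matching rules described above can be sketched in a few lines of Python. This is an illustration of the described behavior, not Provisiond's implementation: the function names are hypothetical, entities are modeled as plain dictionaries, and requiring the regular expression to match the whole value is an assumption.

```python
import re


def value_matches(expected, actual):
    """Exact match, or regular expression match when prefixed with '~'."""
    if expected.startswith("~"):
        # Assumption: the pattern must match the whole value.
        return re.fullmatch(expected[1:], actual) is not None
    return expected == actual


def policy_matches(match_behavior, parameters, entity):
    """Combine per-parameter results with AND (ALL_PARAMETERS) or OR (ANY_PARAMETERS)."""
    results = [value_matches(expected, entity.get(key, ""))
               for key, expected in parameters.items()]
    if match_behavior == "ALL_PARAMETERS":
        return all(results)
    if match_behavior == "ANY_PARAMETERS":
        return any(results)
    raise ValueError(f"unknown matchBehavior: {match_behavior}")
```

For example, with an interface modeled as {"ipAddress": "10.1.1.1", "hostName": "router1"} and the parameters ipAddress set to the illustrative pattern ~^10\..* and hostName set to other, the policy matches under ANY_PARAMETERS but not under ALL_PARAMETERS.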

Any subsequent scan of the node or re-import of the NMS provisioning group will force this policy to be applied. Existing IP interface entities that match this policy will not be deleted. Existing interfaces can be deleted by recreating the node in the Provisioning Groups screen (simply change the foreign ID and re-import the group) or by using the ReST API:

curl -X DELETE -H "Content-Type: application/xml" -u admin:admin http://localhost:8980/opennms/rest/nodes/6/ipinterfaces/10.1.1.1

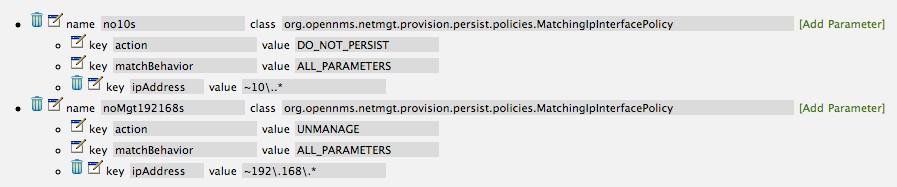

The next step in this example is to define a policy that sets discovered 192.168 network addresses to be unmanaged (not managed) in OpenNMS Horizon. Again, click the Add Policy button and let’s call this policy noMgt192168s. Again, choose the Match IP Interface policy and this time set the action to UNMANAGE.

| The UNMANAGE behavior will be applied to existing interfaces. |

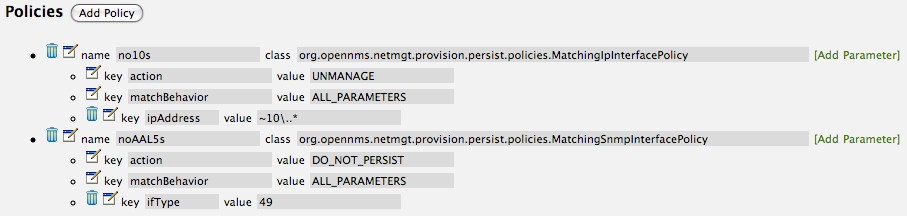

Like the Matching IP Interface Policy, the Matching SNMP Interface policy controls whether discovered SNMP interface entities are to be persisted and whether or not OpenNMS Horizon should collect performance metrics from the SNMP agent for the interface’s index (MIB2 IfIndex).

In this example, we are going to create a policy that doesn’t persist AAL5 over ATM interfaces (ifType 49). Following the same steps as when creating an IP management policy, edit the foreign source definition and create a new policy. Let’s call it noAAL5s. We’ll use the Match SNMP Interface class for this policy and add a parameter with ifType as the key and 49 as the value.

| At the appropriate time during the scanning phase, Provisiond will evaluate the policies in the foreign source definition and take appropriate action. If during the policy evaluation process any policy matches for a “DO_NOT_PERSIST” action, no further policy evaluations will happen for that particular entity (IP Interface, SNMP Interface). |

Another use of this policy is to mark interfaces for polling by the SNMP Interface Poller. The SNMP Interface Poller is a separate daemon that is disabled by default. In order for this daemon to do any work, some SNMP interfaces need to be selected for polling. Use the "ENABLE_POLLING" and "DISABLE_POLLING" actions available in this policy in order to manage which SNMP interfaces are polled by this daemon. Let’s create another policy named pollVoIPDialPeers that marks interfaces with ifType 104 for polling. We’ll set the action to ENABLE_POLLING and matchBehavior to ALL_PARAMETERS. Add a parameter for ifType as the key and 104 as the value.

If you later decide to move all your meetings to Minecraft and Mumble and therefore have no use for voice circuits, you will want to stop polling these interfaces. To do so, change the action to DISABLE_POLLING.

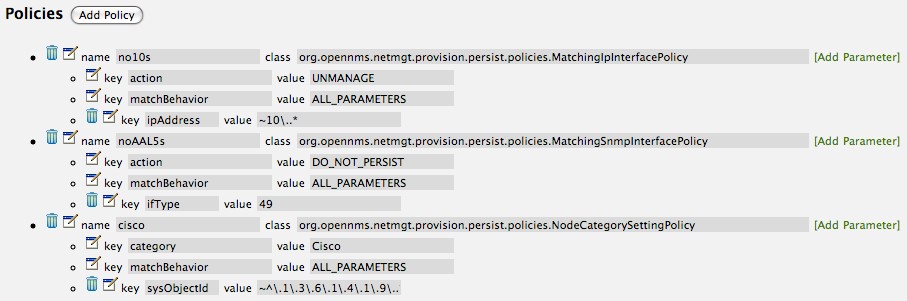

With the Node Categorization Policy, node entities will automatically be assigned categories.

The policy is defined in the same manner as the IP and SNMP interface policies.

Click the Add Policy button, name the policy cisco, and choose the Set Node Category class.

Edit the required category key and set the value to Cisco.

Add a policy parameter and choose the sysObjectId key with a value ~^\.1\.3\.6\.1\.4\.1\.9\..*.
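To check what the sysObjectId expression selects, the following Python sketch applies the same pattern (without the leading ~ marker) to a few object identifiers. It only illustrates the regular expression; the function name categories_for and the sample OIDs are hypothetical, and whole-value matching is an assumption.

```python
import re

# Pattern from the policy above, without the leading "~" marker.
CISCO_SYS_OBJECT_ID = re.compile(r"^\.1\.3\.6\.1\.4\.1\.9\..*")


def categories_for(sys_object_id):
    """Return the categories a node would receive from the 'cisco' policy."""
    return ["Cisco"] if CISCO_SYS_OBJECT_ID.fullmatch(sys_object_id) else []
```

The escaped dots matter: a sysObjectId under enterprise number 99 (.1.3.6.1.4.1.99.…) does not match, because the pattern requires a literal dot directly after the 9.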

The script policy lets you use Groovy scripts to modify provisioned node data.

These scripts have to be placed in the OpenNMS Horizon etc/script-policies directory.

An example would be the change of the node’s primary interface or location.

The script will be invoked for each matching node.

The following example shows the source code for setting the 192.168.100.0/24 interface to PRIMARY while all remaining interfaces are set to SECONDARY.

Furthermore the node’s location is set to Minneapolis.

import org.opennms.netmgt.model.OnmsIpInterface;

import org.opennms.netmgt.model.monitoringLocations.OnmsMonitoringLocation;

import org.opennms.netmgt.model.PrimaryType;

for(OnmsIpInterface iface : node.getIpInterfaces()) {

if (iface.getIpAddressAsString().matches("^192\\.168\\.100\\..*")) {

LOG.warn(iface.getIpAddressAsString() + " set to PRIMARY")

iface.setIsSnmpPrimary(PrimaryType.PRIMARY)

} else {

LOG.warn(iface.getIpAddressAsString() + " set to SECONDARY")

iface.setIsSnmpPrimary(PrimaryType.SECONDARY)

}

}

node.setLocation(new OnmsMonitoringLocation("Minneapolis", ""));

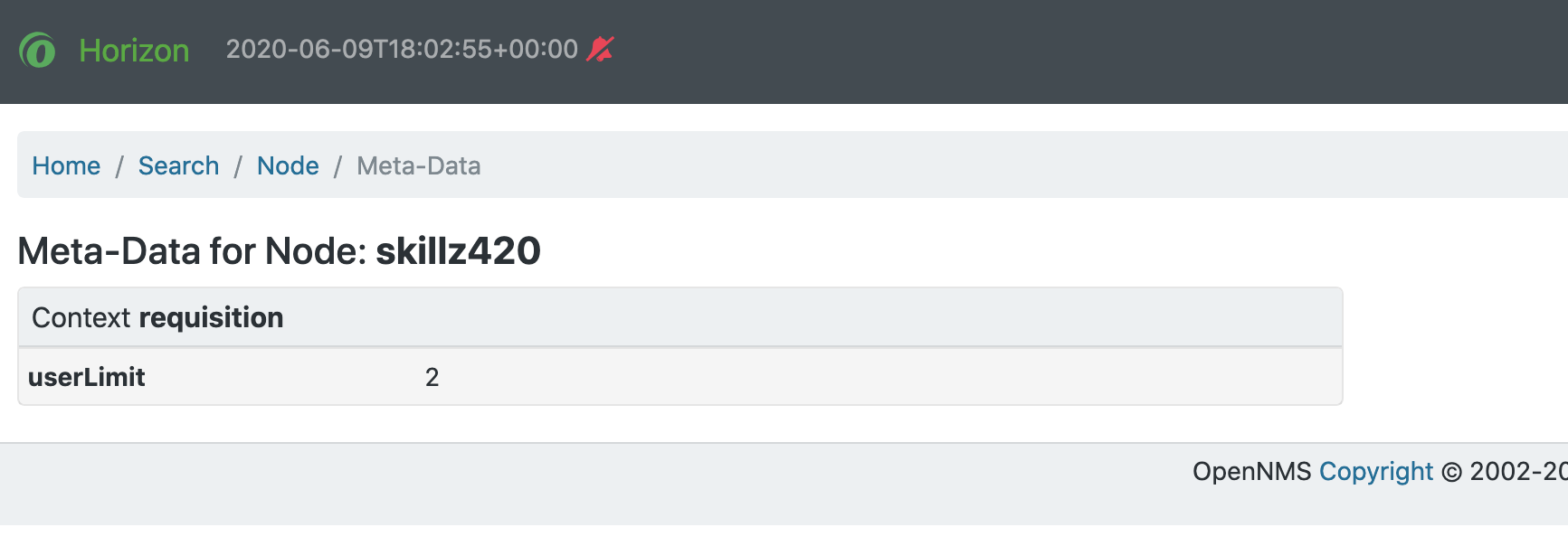

return node;The Metadata Policy allows you to set node-level metadata in the context requisition for provisioned nodes.

It uses the same matching mechanism as the Node Categorization Policy.

The Interface Metadata Policy allows you to set interface-level metadata in the requisition context for provisioned nodes.

It uses the same matching mechanism as the Matching IP Interface Policy.

New Import Capabilities

Several new XML entities have been added to the import requisition since the introduction of the OpenNMS Importer service in version 1.6. So, in addition to provisioning the basic node, interface, service, and node categories, you can now also provision asset data.

Provisiond Configuration

The configuration of the Provisioning system has moved from a properties file (model-importer.properties) to an XML based configuration container.

The configuration is now extensible to allow the definition of zero or more import requisitions, each with its own cron-based schedule for automatic importing from various sources (intended for integration with external URLs such as HTTP and this new DNS protocol handler).

A default configuration is provided in the OpenNMS Horizon etc/ directory and is called provisiond-configuration.xml.

This default configuration contains an example that schedules an import from a DNS server running on localhost, requesting nodes from the zone localhost once per day at midnight. This is not very practical, but it is a good example.

<?xml version="1.0" encoding="UTF-8"?>

<provisiond-configuration xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://xmlns.opennms.org/xsd/config/provisiond-configuration"

foreign-source-dir="/opt/opennms/etc/foreign-sources"

requistion-dir="/opt/opennms/etc/imports"

importThreads="8"

scanThreads="10"

rescanThreads="10"

writeThreads="8" >

<!--
http://www.quartz-scheduler.org/documentation/quartz-1.x/tutorials/crontrigger
Field Name     Allowed Values     Allowed Special Characters
Seconds        0-59               , - * /
Minutes        0-59               , - * /
Hours          0-23               , - * /
Day-of-month   1-31               , - * ? / L W C
Month          1-12 or JAN-DEC    , - * /
Day-of-Week    1-7 or SUN-SAT     , - * ? / L C #
Year (Opt)     empty, 1970-2099   , - * /
-->

<requisition-def import-name="NMS"

import-url-resource="file:///opt/opennms/etc/imports/NMS.xml">

<cron-schedule>0 0 0 * * ? *</cron-schedule> <!-- daily, at midnight -->

</requisition-def>

</provisiond-configuration>

Like many of the daemon configurations in the 1.7 branch, Provisiond’s configuration is reloadable without having to restart OpenNMS Horizon. Use the reloadDaemonConfig UEI:

/opt/opennms/bin/send-event.pl uei.opennms.org/internal/reloadDaemonConfig --parm 'daemonName Provisiond'

This means that you don’t have to restart OpenNMS Horizon every time you update the configuration!

Provisioning Asset Data

The Provisioning Groups Web-UI has been updated to expose the ability to add Node Asset data in an import requisition. Click the Add Node Asset link to select from a drop-down list of all the node asset attributes that can be defined.

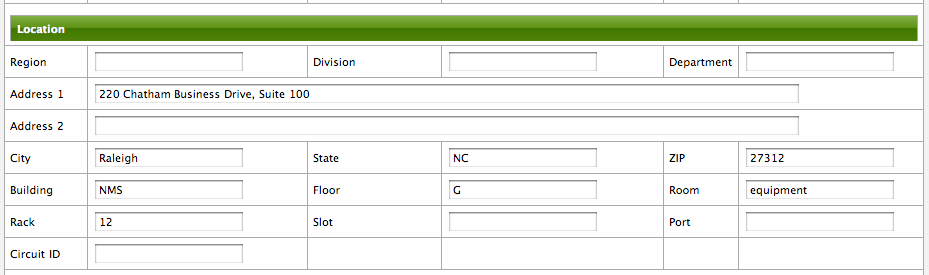

After an import, you can navigate to the Node Page and click the Asset Info link and see the asset data that was just provided in the requisition.

External Requisition Sources

Because Provisiond takes a URL as the location of import requisitions, OpenNMS Horizon can be easily extended to support sources in addition to the native URL handling provided by Java: file://, http://, and https://. When you configure Provisiond to import requisitions on a schedule, you specify the source using a URL resource. For requisitions created by the Provisioning Groups WebUI, you can specify a file-based URL.


Provisioning Nodes from DNS

The new Provisioning service in OpenNMS Horizon is continuously improving and adapting to the needs of the community.

One of the most recent enhancements to the system is built upon the very flexible and extensible API of referencing an import requisition’s location via a URL.

Most commonly, these URLs are files on the file system (e.g. file:/opt/opennms/etc/imports/<my-provisioning-group.xml>) as requisitions created by the Provisioning Groups UI. However, these same requisitions for adding, updating, and deleting nodes (based on the original model importer) can also come from URLs specifying the HTTP protocol (for example, http://myinventory.server.org/nodes.cgi).

Now, using Java’s extensible protocol handling specification, a new protocol handler was created so that a URL can specify a zone transfer (AXFR) request to a DNS server. The A records in the response are used to build an import requisition. This is handy for organizations that use DNS (possibly coupled with an IP management tool) as the database of record for nodes in the network. Rather than ping sweeping the network or entering the nodes manually into the OpenNMS Horizon Provisioning UI, nodes can be managed via one or more DNS servers. The format of the URL for this new protocol handler is:

dns://<host>[:port]/<zone>[/<foreign-source>/][?expression=<regex>]

dns://my-dns-server/myzone.com

This imports all A records from zone myzone.com on host my-dns-server, using the default port 53. Because the foreign source (also known as the provisioning group) is not specified, it defaults to the zone name.

You can also specify a subset of the A records from the zone transfer using a regular expression:

dns://my-dns-server/myzone.com/portland/?expression=^por-.*

This imports nodes from the same server and zone, but manages only the nodes whose records match the regular expression ^por-.*. These nodes are assigned their own foreign source (provisioning group), portland, so that they can be managed as a subset of the nodes in the specified zone.
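As a rough illustration of how such an expression narrows a zone transfer, the following Python sketch filters a list of A records by name. The record names and addresses are hypothetical, and matching the record name with `re.search` is an assumption made for this sketch; it is not taken from the Provisiond implementation.

```python
import re

def select_records(a_records, expression=None):
    """Return the A records whose name matches the optional expression.

    a_records is an iterable of (name, ip_address) tuples standing in for
    the result of a zone transfer. Matching on the record name with
    re.search is an assumption of this sketch.
    """
    if expression is None:
        return list(a_records)
    pattern = re.compile(expression)
    return [(name, ip) for name, ip in a_records if pattern.search(name)]

# Hypothetical zone contents, for illustration only.
zone = [
    ("por-router-1", "10.1.0.1"),
    ("por-switch-7", "10.1.0.7"),
    ("sea-router-1", "10.2.0.1"),
]

# Only records whose name starts with "por-" are kept.
portland = select_records(zone, r"^por-.*")
```

Without an expression, every record from the transfer is kept, which corresponds to the first example URL.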

If your expression requires URL encoding (for example you need to use a ? in the expression) it must be properly encoded.

dns://my-dns-server/myzone.com/portland/?expression=^por[0-9]%3F
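If you generate these URLs from a script, the encoding step can be automated. The sketch below assembles a URL in the format shown above and percent-encodes the expression with Python's standard `urllib.parse.quote`. The function name `build_dns_url` and the choice of characters left unencoded are assumptions made to reproduce the examples in this section.

```python
from urllib.parse import quote

def build_dns_url(host, zone, foreign_source=None, expression=None, port=None):
    """Assemble dns://<host>[:port]/<zone>[/<foreign-source>/][?expression=<regex>]."""
    authority = host if port is None else f"{host}:{port}"
    url = f"dns://{authority}/{zone}"
    if foreign_source is not None:
        url += f"/{foreign_source}/"
    if expression is not None:
        # Leave common regex characters readable; characters such as '?'
        # are percent-encoded ('?' becomes %3F).
        url += "?expression=" + quote(expression, safe="^[]*")
    return url

plain = build_dns_url("my-dns-server", "myzone.com")
subset = build_dns_url("my-dns-server", "myzone.com", "portland", "^por-.*")
encoded = build_dns_url("my-dns-server", "myzone.com", "portland", "^por[0-9]?")
```

The three calls reproduce the three example URLs in this section, including the encoded `?` in the last one.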

Currently, the DNS server must be set up to allow zone transfers to the OpenNMS Horizon server. It is recommended that a secondary DNS server run on the OpenNMS Horizon server and that the OpenNMS Horizon server be allowed to request a zone transfer. A quick way to test whether zone transfers are working is:

dig -t AXFR @<dnsServer> <zone>

4.8. Adapters

The OpenNMS Horizon Provisiond API also supports provisioning adapters (plugins) for integration with external systems during the provisioning import phase. When node entities are added, updated, deleted, or receive a configuration management change event, OpenNMS Horizon will call the adapter for the provisioning activities with integrated systems.

Currently, OpenNMS Horizon supports the following adapters:

4.8.1. DDNS Adapter

The DDNS adapter uses the dynamic DNS protocol to update a DNS system as nodes are provisioned into OpenNMS Horizon.

To configure this adapter, edit the opennms.properties file and set the importer.adapter.dns.server property:

importer.adapter.dns.server=192.168.1.1

4.9. MetaData assigned to Nodes

A requisition can contain arbitrary metadata for each node, interface and service it contains. During provisioning, the metadata is copied to the model and persisted in the database.

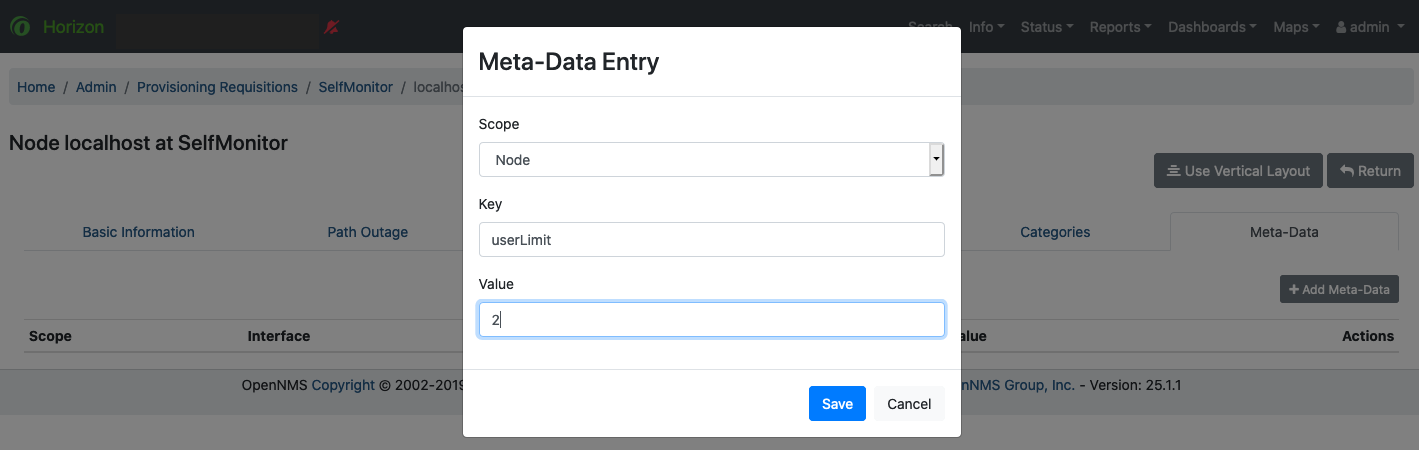

The Requisition UI lets you edit the metadata defined in a requisition.

By design, the edit function in the Requisition UI is limited to the context named requisition.

All other contexts are reserved for future use by provisioning adapters and similar applications, such as asset data.

While provisioning a requisition, the metadata from the requisition is transferred to the database and assigned to the nodes, interfaces and services accordingly.

4.9.1. User-defined contexts

If there is a requirement to add more contexts not managed by OpenNMS Horizon, the context name must be prefixed by X-.

Any third-party software must take care to choose a context name which is unique enough to not conflict with other software.
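A third-party integration could enforce this naming rule before writing metadata. The following Python sketch is one way to do so. The helper names and the vendor-plus-purpose naming scheme are hypothetical, and treating the prefix as case-sensitive is an assumption.

```python
USER_CONTEXT_PREFIX = "X-"

def is_user_defined_context(name):
    """True if the name carries the X- prefix and has something after it."""
    return name.startswith(USER_CONTEXT_PREFIX) and len(name) > len(USER_CONTEXT_PREFIX)

def make_context_name(vendor, purpose):
    """Compose a prefixed context name.

    Including a vendor name is a hypothetical convention intended to
    reduce the chance of clashing with other software.
    """
    return f"{USER_CONTEXT_PREFIX}{vendor}-{purpose}"

context = make_context_name("acme", "inventory")
```

Here `context` is `X-acme-inventory`, which passes the check, while the built-in `requisition` context does not.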

4.10. Fine Grained Provisioning Using provision.pl

provision.pl provides an example command-line interface to the provisioning-related OpenNMS Horizon REST API endpoints.

The script has many options. The first three optional parameters are:

--username (default: admin)

--password (default: admin)

--url (default: http://localhost:8980/opennms/rest)

Run the script with --help to see all the available options.
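To show what these three defaults amount to at the HTTP level, the following Python sketch builds (without sending) an authenticated request against the REST base URL. It assumes HTTP Basic authentication and uses the requisitions endpoint that appears in the curl example later in this chapter. The function name `build_rest_request` is hypothetical.

```python
import base64
import urllib.request

def build_rest_request(path, username="admin", password="admin",
                       base_url="http://localhost:8980/opennms/rest"):
    """Build, but do not send, an authenticated GET request."""
    url = f"{base_url.rstrip('/')}/{path.lstrip('/')}"
    credentials = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(url, method="GET")
    # Basic authentication is an assumption of this sketch.
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Accept", "application/xml")
    return request

request = build_rest_request("requisitions")

# To send it against a running server:
# with urllib.request.urlopen(request) as response:
#     print(response.read().decode("utf-8"))
```

Change the default credentials before using anything like this outside a test system.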

4.10.1. Create a new requisition

provision.pl provides easy access to the requisition REST service using the requisition option:

${OPENNMS_HOME}/bin/provision.pl requisition add customer1

This command creates a new, empty requisition (one containing no nodes) in OpenNMS Horizon.

The new requisition starts life in the pending state.

This allows you to iteratively build the requisition and then later actually import the nodes in the requisition into OpenNMS Horizon.

This handles all adds/changes/deletes at once.

So, you could be making changes all day and then at night either have a schedule in OpenNMS Horizon that imports the group automatically or you can send a command through the REST service from an outside system to have the pending requisition imported/reimported.

You can get a list of all existing requisitions with the list option of the provision.pl script:

${OPENNMS_HOME}/bin/provision.pl list

Create a new Node

${OPENNMS_HOME}/bin/provision.pl node add customer1 1 node-a

This command creates a node element in the requisition customer1 called node-a using the script’s node option. The node’s foreign-ID is 1 but it can be any alphanumeric value as long as it is unique within the requisition. Note the node has no interfaces or services yet.

Add an Interface Element to that Node

${OPENNMS_HOME}/bin/provision.pl interface add customer1 1 127.0.0.1

This command adds an interface element to the node element using the interface option of provision.pl. You can now see it in the pending requisition by running provision.pl requisition list customer1.

Add a Couple of Services to that Interface

${OPENNMS_HOME}/bin/provision.pl service add customer1 1 127.0.0.1 ICMP

${OPENNMS_HOME}/bin/provision.pl service add customer1 1 127.0.0.1 SNMP

This adds the two services to the 127.0.0.1 interface. They are now in the pending requisition.

Set the Primary SNMP Interface

${OPENNMS_HOME}/bin/provision.pl interface set customer1 1 127.0.0.1 snmp-primary P

This sets the 127.0.0.1 interface to be the node’s Primary SNMP interface.

Add a couple of Node Categories

${OPENNMS_HOME}/bin/provision.pl category add customer1 1 Routers

${OPENNMS_HOME}/bin/provision.pl category add customer1 1 Production

This adds the two categories to the node. They are now in the pending requisition.

These categories are case-sensitive but do not have to be already defined in OpenNMS Horizon. They will be created on the fly during the import if they do not already exist.

Setting Asset Fields on a Node

${OPENNMS_HOME}/bin/provision.pl asset add customer1 1 serialnumber 9999

This adds the value 9999 to the asset field serialnumber.
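At this point it can help to picture what the pending requisition contains. The Python sketch below rebuilds the same node, interface, services, categories, and asset field as an XML tree using the standard library. The element and attribute names reflect the requisition XML format as commonly documented and should be treated as assumptions here. The XML namespace is omitted for brevity.

```python
import xml.etree.ElementTree as ET

def build_requisition():
    """Rebuild the customer1 requisition from the steps above as XML."""
    root = ET.Element("model-import", {"foreign-source": "customer1"})
    node = ET.SubElement(root, "node", {"foreign-id": "1", "node-label": "node-a"})
    # The interface carries the primary SNMP flag set earlier.
    iface = ET.SubElement(node, "interface",
                          {"ip-addr": "127.0.0.1", "snmp-primary": "P"})
    for service in ("ICMP", "SNMP"):
        ET.SubElement(iface, "monitored-service", {"service-name": service})
    for category in ("Routers", "Production"):
        ET.SubElement(node, "category", {"name": category})
    ET.SubElement(node, "asset", {"name": "serialnumber", "value": "9999"})
    return root

requisition = build_requisition()
print(ET.tostring(requisition, encoding="unicode"))
```

Compare the printed structure with the output of provision.pl requisition list customer1 on your own system rather than relying on this sketch.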

${OPENNMS_HOME}/bin/provision.pl requisition import customer1

This will cause OpenNMS Horizon Provisiond to import the pending customer1 requisition.

The formerly pending requisition will move into the deployed state inside OpenNMS Horizon.

Deleting a node is very much the same as adding one, except that only a single delete command and a re-import are required. During the re-import, Provisiond runs the audit phase and determines that the node has been removed from the requisition. The node is then deleted from the database and all services stop activities related to it.

${OPENNMS_HOME}/bin/provision.pl node delete customer1 1 node-a

${OPENNMS_HOME}/bin/provision.pl requisition import customer1

This completes the life cycle of managing a node element, iteratively, in an import requisition.
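When the same sequence has to be repeated for many nodes, the commands can be generated rather than typed. The following Python sketch produces the argument lists for the add-and-import steps shown in this section. It assumes the requisition already exists, falls back to /opt/opennms when OPENNMS_HOME is not set, and uses a hypothetical function name. It only builds the commands and does not run them.

```python
import os

def provisioning_commands(requisition, foreign_id, label, ip,
                          services, categories, opennms_home=None):
    """Return the provision.pl invocations for one node, in order."""
    home = opennms_home or os.environ.get("OPENNMS_HOME", "/opt/opennms")
    script = f"{home}/bin/provision.pl"
    commands = [
        [script, "node", "add", requisition, foreign_id, label],
        [script, "interface", "add", requisition, foreign_id, ip],
    ]
    commands += [[script, "service", "add", requisition, foreign_id, ip, name]
                 for name in services]
    commands.append([script, "interface", "set", requisition, foreign_id, ip,
                     "snmp-primary", "P"])
    commands += [[script, "category", "add", requisition, foreign_id, name]
                 for name in categories]
    # A single import at the end applies all pending changes at once.
    commands.append([script, "requisition", "import", requisition])
    return commands

commands = provisioning_commands("customer1", "1", "node-a", "127.0.0.1",
                                 ["ICMP", "SNMP"], ["Routers", "Production"],
                                 opennms_home="/opt/opennms")

# To execute them:
# import subprocess
# for command in commands:
#     subprocess.run(command, check=True)
```

Keeping the import as the last command mirrors the pending-then-deployed workflow described above.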

4.11. Yet Other API Examples

Some features are not supplied by the provision.pl script but are available via the REST API. The following example uses curl to retrieve a requisition and format its XML:

#!/usr/bin/env bash

REQ=$1

curl -X GET -H "Content-Type: application/xml" -u admin:admin http://localhost:8980/opennms/rest/requisitions/$REQ 2>/dev/null | xmllint --format -

4.12. SNMP Profiles

SNMP Profiles are prefabricated sets of SNMP configuration which are automatically "fitted" against eligible IP addresses at provisioning time. Each profile may have a unique label and an optional filter expression. If the filter expression is present, it will be evaluated to check whether a given IP address or reverse-lookup hostname passes the filter. A profile with a filter expression will be fitted to a given IP address only if the filter expression evaluates true against that IP address.

SNMP profiles can be added to snmp-config.xml to enable automatic fitting of SNMP interfaces.

<snmp-config xmlns="http://xmlns.opennms.org/xsd/config/snmp" write-community="private" read-community="public" timeout="800" retry="3">

<definition version="v1" ttl="6000">

<specific>127.0.0.1</specific>

</definition>

<profiles>

<profile version="v1" read-community="horizon" timeout="10000">

<label>profile1</label>

</profile>

<profile version="v1" ttl="6000">

<label>profile2</label>

<filter>iphostname LIKE '%opennms%'</filter>

</profile>

<profile version="v1" read-community="meridian">

<label>profile3</label>

<filter>IPADDR IPLIKE 172.1.*.*</filter>

</profile>

</profiles>

</snmp-config>

In the above config:

-

profile1 doesn’t have a filter expression. This profile will be tried for every interface.

-

profile2 has a filter expression that compares iphostname (the hostname resulting from a reverse DNS lookup of the IP address being fitted) against a preconfigured value. This profile’s SNMP parameters will be fitted only against IP addresses whose hostname contains the string opennms.

-

profile3 has an IPLIKE expression that matches all interfaces in the range specified in the filter. This profile’s SNMP parameters will be fitted only against IP addresses in the range specified by the IPLIKE expression.

Profiles will be tried in the order they are configured.

The first match that produces a successful SNMP GET-REQUEST on the scalar instance of sysObjectID will be saved by Provisiond as the SNMP configuration definition to use for all future SNMP operations against the fitted IP address.
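The fitting order can be sketched in a few lines of Python. This is an illustrative model only and not Provisiond's implementation. Filter expressions are replaced by plain Python predicates, the SNMP GET of sysObjectID is replaced by a stub called `fake_probe`, and the agents table is invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Profile:
    label: str
    read_community: str
    # Stand-in for a filter expression; None means the profile has no filter.
    matches: Optional[Callable[[str, str], bool]] = None

def fit_profile(profiles, ip, hostname, probe):
    """Return the first profile that passes its filter and the probe.

    probe(ip, profile) stands in for the SNMP GET of sysObjectID and
    returns True on success.
    """
    for profile in profiles:  # profiles are tried in configuration order
        if profile.matches is not None and not profile.matches(ip, hostname):
            continue
        if probe(ip, profile):
            return profile
    return None

profiles = [
    Profile("profile1", "horizon"),
    Profile("profile2", "public", lambda ip, host: "opennms" in host),
    Profile("profile3", "meridian", lambda ip, host: ip.startswith("172.1.")),
]

# Invented agents, each answering to a single community string.
agents = {"172.1.1.105": "meridian", "10.0.0.9": "horizon"}

def fake_probe(ip, profile):
    return agents.get(ip) == profile.read_community

fitted = fit_profile(profiles, "172.1.1.105", "sw1.example.org", fake_probe)
```

In this model, profile1 is tried first for 172.1.1.105 because it has no filter, but its probe fails. profile2 is skipped by its filter, so profile3 is the profile that fits.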

The profile label default is reserved for the default SNMP configuration.

The opennms:snmp-fit Karaf shell command finds a matching profile for a given IP address and prints out the resulting config.

Matching or "fitting" an SNMP profile should be understood as passing the profile’s filter expression and success in getting the scalar sysObjectID instance.

$ ssh -p 8101 admin@localhost

...

admin@opennms()> opennms:snmp-fit -l MINION -s 172.1.1.105 (1)

admin@opennms()> opennms:snmp-fit 172.1.1.106 profile1 (2)

admin@opennms()> opennms:snmp-fit -s -n -f Switches 172.1.1.107 profile2 (3)

| 1 | searches the profiles that fit the IP address 172.1.1.105 at location Minion and saves the resulting configuration as a definition for future use. |

| 2 | checks whether the profile with label profile1 is a fit for IP address 172.1.1.106, but does not save the resulting configuration if it is a fit. |

| 3 | checks whether the profile labeled profile2 is a fit for IP address 172.1.1.107; if so, it saves the resulting configuration and also sends a newSuspect event, telling OpenNMS Horizon to auto-provision the node at that IP address into the Switches requisition.

If it succeeds, it prints out the resulting agent config, but does not save any definition. |

The opennms:snmp-remove-from-definition Karaf shell command removes an IP address from the system-wide SNMP configuration definitions.

$ ssh -p 8101 admin@localhost

...

admin@opennms()> opennms:snmp-remove-from-definition -l MINION 172.1.0.255

This removes IP address 172.1.0.255 at location MINION from the system-wide SNMP configuration so that this IP address can be fitted to a new profile.

This command might be useful when an IP address formerly assigned to an SNMPv2c-capable switch is reassigned to an SNMPv3-capable load balancer.

By default, SnmpDetector doesn’t use SNMP profiles. To make it use them, add the property useSnmpProfiles to the detector and set it to true.

4.13. Auto Discovery with Detectors

Currently, OpenNMS Horizon uses an ICMP ping sweep to find IP addresses on the network.

The IP ranges and specifics can be defined in discovery-configuration.xml as shown below.

<discovery-configuration xmlns="http://xmlns.opennms.org/xsd/config/discovery" packets-per-second="1"

initial-sleep-time="30000" restart-sleep-time="86400000" retries="1" timeout="2000">

<!-- see examples/discovery-configuration.xml for options -->

<specific>10.0.0.5</specific>

<include-range>

<begin>192.168.0.1</begin>

<end>192.168.0.254</end>

</include-range>

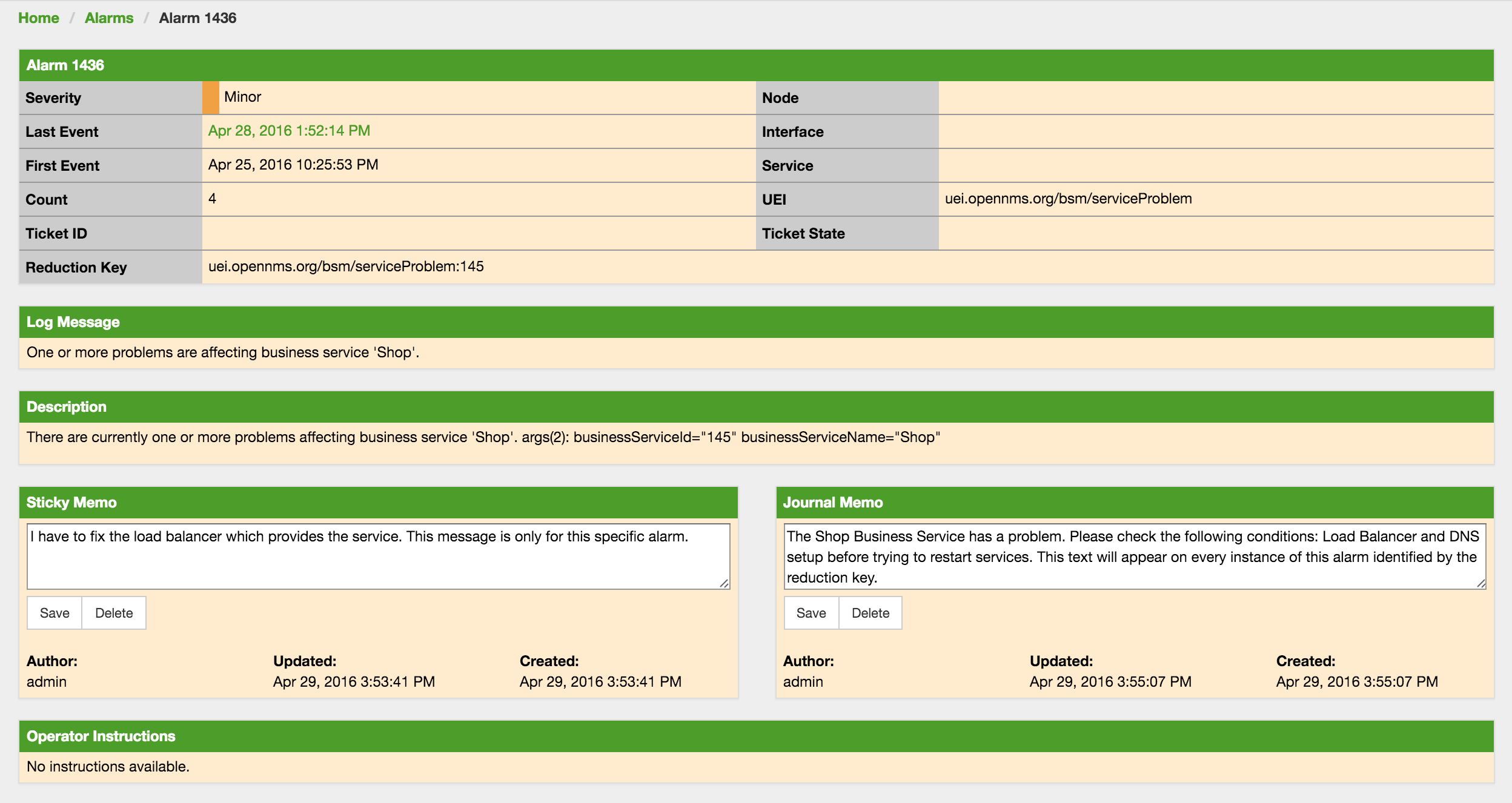

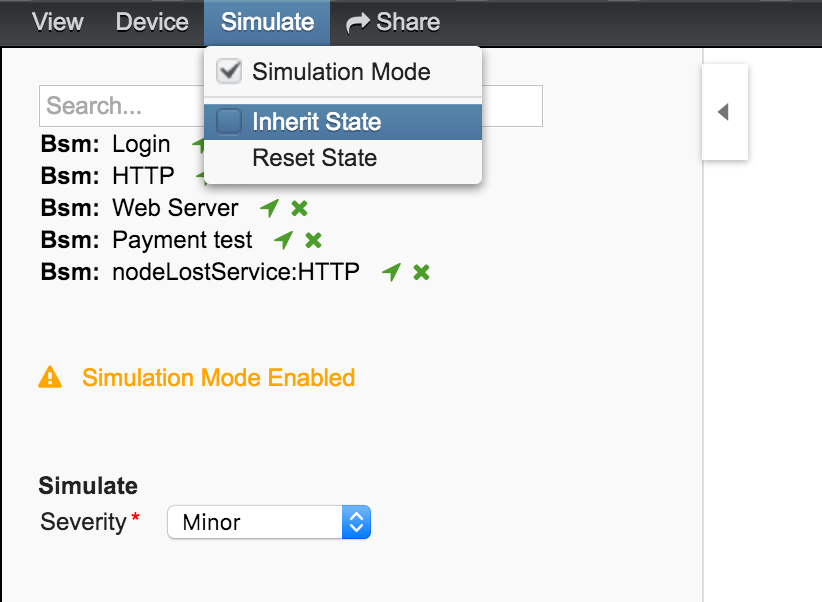

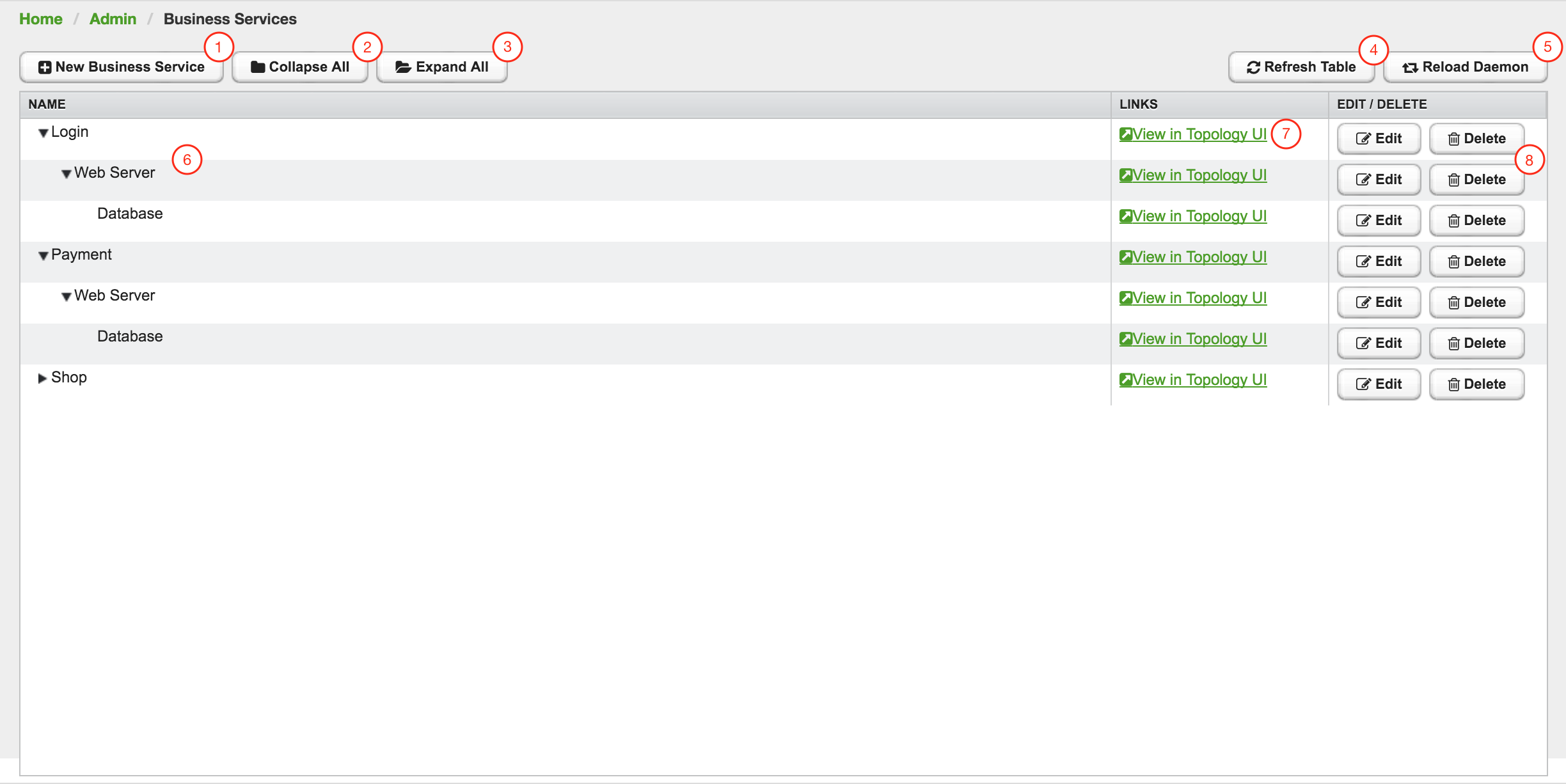

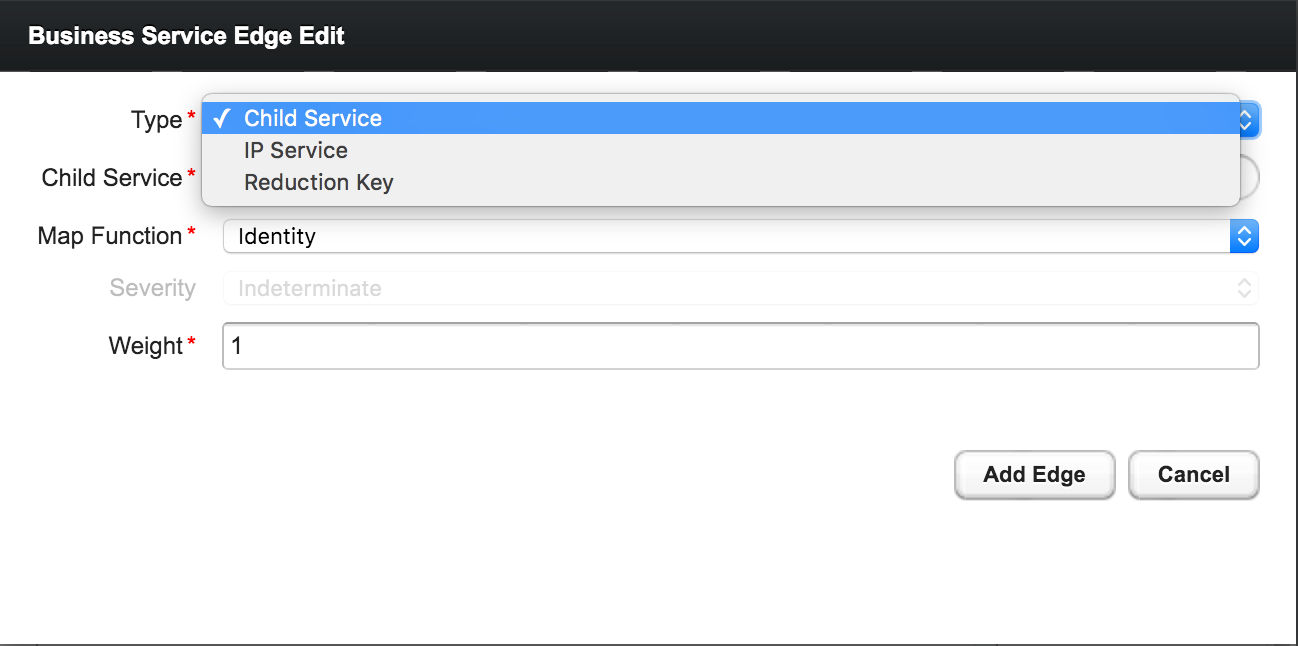

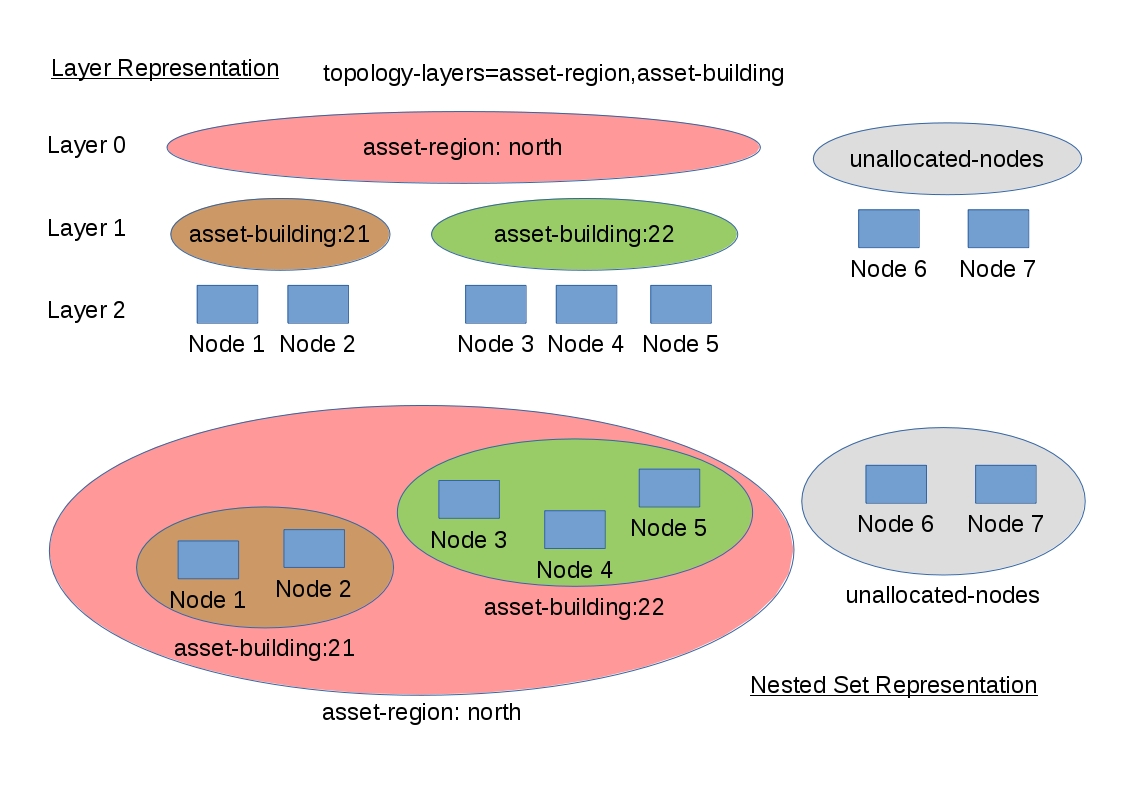

<include-url>file:/opt/opennms/etc/include.txt</include-url>